Configuration

7. Maven Resources

Spring Cloud Data Flow supports referencing artifacts through Maven (maven: URIs).

If you want to override specific Maven configuration properties (remote repositories, proxies, and others) or run the Data Flow Server behind a proxy, you need to specify those properties as command-line arguments when you start the Data Flow Server, as shown in the following example:

$ java -jar spring-cloud-dataflow-server-2.10.3.jar --spring.config.additional-location=/home/joe/maven.yml

The preceding command assumes a maven.yml file similar to the following:

maven:
  localRepository: mylocal
  remote-repositories:
    repo1:
      url: https://repo1
      auth:
        username: user1
        password: pass1
      snapshot-policy:
        update-policy: daily
        checksum-policy: warn
      release-policy:
        update-policy: never
        checksum-policy: fail
    repo2:
      url: https://repo2
      policy:
        update-policy: always
        checksum-policy: fail
  proxy:
    host: proxy1
    port: "9010"
    auth:
      username: proxyuser1
      password: proxypass1

By default, the proxy protocol is set to http. You can omit the auth properties if the proxy does not need a username and password. Also, by default, the Maven localRepository is set to ${user.home}/.m2/repository/.

As shown in the preceding example, you can specify the remote repositories along with their authentication (if needed). If the remote repositories are behind a proxy, you can also specify the proxy properties.

You can specify repository policies for each remote repository configuration. The policy key applies to both the snapshot and the release repository policies.

See the Repository Policies topic for the list of supported repository policies.
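Because the settings in maven.yml are ordinary Spring Boot properties, you can also write each nested key as a single dot-separated key on the command line, for example --maven.remote-repositories.repo1.url=https://repo1. The following Java 17 sketch illustrates that correspondence. MavenArgsFlattener is a hypothetical helper written for this illustration and is not part of Data Flow. It assumes the YAML has already been parsed into nested maps.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper (not part of Spring Cloud Data Flow): turns a nested
// configuration map, such as a parsed maven.yml, into flat --key=value arguments.
final class MavenArgsFlattener {

    /** Returns one "--a.b.c=value" argument per leaf value, in map iteration order. */
    static List<String> toArgs(Map<?, ?> config) {
        List<String> args = new ArrayList<>();
        flatten("", config, args);
        return args;
    }

    private static void flatten(String prefix, Map<?, ?> node, List<String> out) {
        for (Map.Entry<?, ?> entry : node.entrySet()) {
            String key = prefix.isEmpty()
                    ? String.valueOf(entry.getKey())
                    : prefix + "." + entry.getKey();
            if (entry.getValue() instanceof Map<?, ?> child) {
                flatten(key, child, out);                      // nested section: recurse
            } else {
                out.add("--" + key + "=" + entry.getValue());  // leaf: one argument
            }
        }
    }

    public static void main(String[] args) {
        Map<String, Object> auth = new LinkedHashMap<>();
        auth.put("username", "user1");
        auth.put("password", "pass1");
        Map<String, Object> repo1 = new LinkedHashMap<>();
        repo1.put("url", "https://repo1");
        repo1.put("auth", auth);
        Map<String, Object> maven = new LinkedHashMap<>();
        maven.put("localRepository", "mylocal");
        maven.put("remote-repositories", Map.of("repo1", repo1));
        toArgs(Map.of("maven", maven)).forEach(System.out::println);
    }
}
```

Running main prints --maven.localRepository=mylocal, --maven.remote-repositories.repo1.url=https://repo1, --maven.remote-repositories.repo1.auth.username=user1, and --maven.remote-repositories.repo1.auth.password=pass1, one per line. This sketch does not handle YAML lists.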

Because these are Spring Boot @ConfigurationProperties, you can also specify them by adding them to the SPRING_APPLICATION_JSON environment variable. The following example shows how the JSON is structured:

$ SPRING_APPLICATION_JSON='
{
  "maven": {
    "local-repository": null,
    "remote-repositories": {
      "repo1": {
        "url": "https://repo1",
        "auth": {
          "username": "repo1user",
          "password": "repo1pass"
        }
      },
      "repo2": {
        "url": "https://repo2"
      }
    },
    "proxy": {
      "host": "proxyhost",
      "port": 9018,
      "auth": {
        "username": "proxyuser",
        "password": "proxypass"
      }
    }
  }
}
'

7.1. Wagon

There is limited support for using Wagon transport with Maven. Currently, it exists to support preemptive authentication with HTTP-based repositories and needs to be enabled manually.

Wagon-based HTTP transport is enabled by setting the maven.use-wagon property to true. Then you can enable preemptive authentication for each remote repository. The configuration loosely follows the patterns found in HttpClient HTTP Wagon. At the time of this writing, the documentation on Maven's own site is slightly misleading and is missing most of the possible configuration options.

The maven.remote-repositories.<repo>.wagon.http namespace contains all Wagon HTTP-related settings. The keys directly under it map to the supported HTTP methods (namely all, put, get, and head), as in Maven's own configuration. Under these method configurations, you can then set various options, such as use-preemptive. A simple preemptive configuration that sends an auth header with all requests to a specified remote repository would look like the following example:

maven:
  use-wagon: true
  remote-repositories:
    springRepo:
      url: https://repo.example.org
      wagon:
        http:
          all:
            use-preemptive: true
      auth:
        username: user
        password: password

Instead of configuring all methods, you can tune settings for get and head requests only, as follows:

maven:
  use-wagon: true
  remote-repositories:
    springRepo:
      url: https://repo.example.org
      wagon:
        http:
          get:
            use-preemptive: true
          head:
            use-preemptive: true
            use-default-headers: true
            connection-timeout: 1000
            read-timeout: 1000
            headers:
              sample1: sample2
            params:
              http.socket.timeout: 1000
              http.connection.stalecheck: true
      auth:
        username: user
        password: password

There are settings for use-default-headers, connection-timeout, read-timeout, request headers, and HttpClient params. For more about parameters, see Wagon ConfigurationUtils.
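Preemptive authentication means that the client sends its credentials with the first request instead of waiting for the repository to answer with a 401 challenge. Data Flow implements this through Wagon. The following Java 11+ sketch only illustrates the idea, using the JDK's java.net.http API to attach a Basic Authorization header up front. The PreemptiveAuth class, the artifact path, and the credentials (the placeholder values from the preceding YAML) are assumptions for illustration.

```java
import java.net.URI;
import java.net.http.HttpRequest;
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Illustration only: Data Flow itself configures this through Wagon, not through this class.
final class PreemptiveAuth {

    /** Builds the value of a Basic Authorization header for user:password. */
    static String basicHeader(String user, String password) {
        String credentials = user + ":" + password;
        return "Basic " + Base64.getEncoder()
                .encodeToString(credentials.getBytes(StandardCharsets.UTF_8));
    }

    /** Builds a GET request that carries credentials on the first attempt (no 401 round trip). */
    static HttpRequest preemptiveGet(String url, String user, String password) {
        return HttpRequest.newBuilder(URI.create(url))
                .header("Authorization", basicHeader(user, password))
                .GET()
                .build();
    }

    public static void main(String[] args) {
        // Hypothetical artifact path under the example repository URL.
        HttpRequest request = preemptiveGet("https://repo.example.org/some/path", "user", "password");
        System.out.println(request.headers().firstValue("Authorization").orElseThrow());
    }
}
```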

8. Security

By default, the Data Flow server is unsecured and runs on an unencrypted HTTP connection. You can secure your REST endpoints as well as the Data Flow Dashboard by enabling HTTPS and requiring clients to authenticate with OAuth 2.0.

| The Azure appendix contains more information about how to set up Azure Active Directory integration. |

| By default, the REST endpoints (administration, management, and health) as well as the Dashboard UI do not require authenticated access. |

While you can theoretically choose any OAuth provider in conjunction with Spring Cloud Data Flow, we recommend using the CloudFoundry User Account and Authentication (UAA) Server.

Not only is the UAA OpenID certified and used by Cloud Foundry, but you can also use it in local stand-alone deployment scenarios. Furthermore, the UAA not only provides its own user store but also provides comprehensive LDAP integration.

8.1. Enabling HTTPS

By default, the dashboard, management, and health endpoints use HTTP as a transport. You can switch to HTTPS by adding a certificate to your configuration in application.yml, as shown in the following example:

server:
  port: 8443                                       (1)
  ssl:
    key-alias: yourKeyAlias                        (2)
    key-store: path/to/keystore                    (3)
    key-store-password: yourKeyStorePassword       (4)
    key-password: yourKeyPassword                  (5)
    trust-store: path/to/trust-store               (6)
    trust-store-password: yourTrustStorePassword   (7)

| 1 | As the default port is 9393, you may choose to change the port to a more common HTTPS-typical port. |
| 2 | The alias (or name) under which the key is stored in the keystore. |
| 3 | The path to the keystore file. You can also specify classpath resources by using the classpath: prefix (for example, classpath:path/to/keystore). |
| 4 | The password of the keystore. |
| 5 | The password of the key. |
| 6 | The path to the truststore file. You can also specify classpath resources by using the classpath: prefix (for example, classpath:path/to/trust-store). |
| 7 | The password of the truststore. |

| If HTTPS is enabled, it completely replaces HTTP as the protocol over which the REST endpoints and the Data Flow Dashboard interact. Plain HTTP requests fail. Therefore, make sure that you configure your Shell accordingly. |

Using Self-Signed Certificates

For testing purposes or during development, it might be convenient to create self-signed certificates. To get started, execute the following command to create a certificate:

$ keytool -genkey -alias dataflow -keyalg RSA -keystore dataflow.keystore \
    -validity 3650 -storetype JKS \
    -dname "CN=localhost, OU=Spring, O=Pivotal, L=Kailua-Kona, ST=HI, C=US" \
    -keypass dataflow -storepass dataflow

CN is the important parameter here. It should match the domain you are trying to access (for example, localhost).

Then add the following lines to your application.yml file:

server:
  port: 8443
  ssl:
    enabled: true
    key-alias: dataflow
    key-store: "/your/path/to/dataflow.keystore"
    key-store-type: jks
    key-store-password: dataflow
    key-password: dataflow

This is all you need to do for the Data Flow Server. Once you start the server, you should be able to access it at https://localhost:8443/.

Because this is a self-signed certificate, your browser shows a warning, which you need to ignore.

| Never use self-signed certificates in production. |

Self-Signed Certificates and the Shell

By default, self-signed certificates are an issue for the shell, and additional steps are necessary to make the shell work with self-signed certificates. Two options are available:

- Add the self-signed certificate to the JVM truststore.
- Skip certificate validation.

Adding the Self-signed Certificate to the JVM Truststore

In order to use the JVM truststore option, you need to export the previously created certificate from the keystore, as follows:

$ keytool -export -alias dataflow -keystore dataflow.keystore -file dataflow_cert -storepass dataflow

Next, you need to create a truststore that the shell can use, as follows:

$ keytool -importcert -keystore dataflow.truststore -alias dataflow -storepass dataflow -file dataflow_cert -noprompt

Now you are ready to launch the Data Flow Shell with the following JVM arguments:

$ java -Djavax.net.ssl.trustStorePassword=dataflow \
    -Djavax.net.ssl.trustStore=/path/to/dataflow.truststore \
    -Djavax.net.ssl.trustStoreType=jks \
    -jar spring-cloud-dataflow-shell-2.10.3.jar

| If you run into trouble establishing a connection over SSL, you can enable additional logging by using and setting the |

Do not forget to target the Data Flow Server with the following command:

dataflow:> dataflow config server --uri https://localhost:8443/

Skipping Certificate Validation

Alternatively, you can bypass certificate validation by providing the optional --dataflow.skip-ssl-validation=true command-line parameter. If you set this command-line parameter, the shell accepts any (self-signed) SSL certificate.

| If possible, you should avoid using this option. Disabling the trust manager defeats the purpose of SSL and makes your application vulnerable to man-in-the-middle attacks. |
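If you write your own Java client instead of using the shell, a safer alternative to skipping validation is to trust only the certificate you exported earlier. The following Java 11+ sketch uses a hypothetical TrustStoreContext class. It loads the dataflow.truststore created with keytool above (JKS type, password dataflow) and builds an SSLContext from it with the standard JSSE KeyStore, TrustManagerFactory, and SSLContext classes.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.security.GeneralSecurityException;
import java.security.KeyStore;
import javax.net.ssl.SSLContext;
import javax.net.ssl.TrustManagerFactory;

final class TrustStoreContext {

    /** Loads a JKS truststore, such as the dataflow.truststore created with keytool. */
    static KeyStore loadTrustStore(Path path, char[] password)
            throws IOException, GeneralSecurityException {
        KeyStore trustStore = KeyStore.getInstance("JKS");
        try (InputStream in = Files.newInputStream(path)) {
            trustStore.load(in, password);
        }
        return trustStore;
    }

    /** Builds a TLS context that trusts only the certificates in the given truststore. */
    static SSLContext sslContextFor(KeyStore trustStore) throws GeneralSecurityException {
        TrustManagerFactory tmf =
                TrustManagerFactory.getInstance(TrustManagerFactory.getDefaultAlgorithm());
        tmf.init(trustStore);
        SSLContext context = SSLContext.getInstance("TLS");
        context.init(null, tmf.getTrustManagers(), null); // no client key managers needed
        return context;
    }

    public static void main(String[] args) throws Exception {
        KeyStore trustStore = loadTrustStore(Path.of("dataflow.truststore"), "dataflow".toCharArray());
        SSLContext context = sslContextFor(trustStore);
        System.out.println("Trusting " + trustStore.size() + " certificate(s) via " + context.getProtocol());
    }
}
```

You can pass the resulting context to HttpClient.newBuilder().sslContext(...). The certificate was issued for CN=localhost, so connect through https://localhost:8443/ for host name verification to succeed.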

8.2. Authentication by using OAuth 2.0

To support authentication and authorization, Spring Cloud Data Flow uses OAuth 2.0. It lets you integrate Spring Cloud Data Flow into Single Sign On (SSO) environments.

| As of Spring Cloud Data Flow 2.0, OAuth2 is the only mechanism for providing authentication and authorization. |

The following OAuth2 Grant Types are used:

- Authorization Code: Used for the GUI (browser) integration. Visitors are redirected to your OAuth Service for authentication.
- Password: Used by the shell (and the REST integration), so visitors can log in with username and password.
- Client Credentials: Retrieves an access token directly from your OAuth provider and passes it to the Data Flow server by using the Authorization HTTP header.

| Currently, Spring Cloud Data Flow uses opaque tokens and not transparent tokens (JWT). |

You can access the REST endpoints in two ways:

- Basic authentication, which uses the Password Grant Type to authenticate with your OAuth2 service.
- Access token, which uses the Client Credentials Grant Type.

| When you set up authentication, you really should enable HTTPS as well, especially in production environments. |

You can turn on OAuth2 authentication by adding the following to application.yml or by setting environment variables. The following example shows the minimal setup needed for the CloudFoundry User Account and Authentication (UAA) Server:

spring:
  security:
    oauth2:                                                            (1)
      client:
        registration:
          uaa:                                                         (2)
            client-id: myclient
            client-secret: mysecret
            redirect-uri: '{baseUrl}/login/oauth2/code/{registrationId}'
            authorization-grant-type: authorization_code
            scope:
            - openid                                                   (3)
        provider:
          uaa:
            jwk-set-uri: http://uaa.local:8080/uaa/token_keys
            token-uri: http://uaa.local:8080/uaa/oauth/token
            user-info-uri: http://uaa.local:8080/uaa/userinfo          (4)
            user-name-attribute: user_name                             (5)
            authorization-uri: http://uaa.local:8080/uaa/oauth/authorize
      resourceserver:
        opaquetoken:
          introspection-uri: http://uaa.local:8080/uaa/introspect      (6)
          client-id: dataflow
          client-secret: dataflow

| 1 | Providing this property activates OAuth2 security. |
| 2 | The provider ID. You can specify more than one provider. |
| 3 | As the UAA is an OpenID provider, you must at least specify the openid scope. If your provider also provides additional scopes to control the role assignments, you must specify those scopes here as well. |
| 4 | OpenID endpoint. Used to retrieve user information such as the username. Mandatory. |
| 5 | The JSON property of the response that contains the username. |
| 6 | Used to introspect and validate a directly passed-in token. Mandatory. |

You can verify that basic authentication is working properly by using curl, as follows:

curl -u myusername:mypassword http://localhost:9393/ -H 'Accept: application/json'

As a result, you should see a list of available REST endpoints.

When you access the root URL with a web browser and security enabled, you are redirected to the Dashboard UI. To see the list of REST endpoints, specify the application/json Accept header. If you use tools such as Postman (Chrome) or RESTClient (Firefox), be sure to add the Accept header there as well.
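You can make the same check from Java. The following Java 11+ sketch uses a hypothetical DataflowRootRequest class to build the request that the preceding curl command sends: Basic credentials plus the Accept: application/json header. It also shows the variant that carries an OAuth2 access token in a Bearer Authorization header, which is described next. The server URL is the default http://localhost:9393/ used in this section.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;
import java.util.Base64;

final class DataflowRootRequest {

    // Default local Data Flow server address used throughout this section.
    static final URI ROOT = URI.create("http://localhost:9393/");

    /** Mirrors: curl -u user:password http://localhost:9393/ -H 'Accept: application/json' */
    static HttpRequest withBasicAuth(String user, String password) {
        String encoded = Base64.getEncoder()
                .encodeToString((user + ":" + password).getBytes(StandardCharsets.UTF_8));
        return rootRequest("Basic " + encoded);
    }

    /** Same request, authenticated with an OAuth2 access token instead of a password. */
    static HttpRequest withAccessToken(String accessToken) {
        return rootRequest("Bearer " + accessToken);
    }

    private static HttpRequest rootRequest(String authorizationValue) {
        return HttpRequest.newBuilder(ROOT)
                .header("Authorization", authorizationValue)
                // Ask for the JSON list of REST endpoints rather than the Dashboard UI.
                .header("Accept", "application/json")
                .GET()
                .build();
    }

    public static void main(String[] args) throws Exception {
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(withBasicAuth("myusername", "mypassword"), HttpResponse.BodyHandlers.ofString());
        System.out.println(response.statusCode());
        System.out.println(response.body());
    }
}
```

For the dataflow:dataflow credentials, the Basic value is ZGF0YWZsb3c6ZGF0YWZsb3c=. This is the same value curl sends in the UAA token request shown later in this chapter.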

Besides basic authentication, you can also provide an access token to access the REST API. To do so, retrieve an OAuth2 access token from your OAuth2 provider and pass that access token to the REST API by using the Authorization HTTP header, as follows:

$ curl -H "Authorization: Bearer <ACCESS_TOKEN>" http://localhost:9393/ -H 'Accept: application/json'

8.3. Customizing Authorization

The preceding content mostly deals with authentication (that is, how to assess the identity of the user). In this section, we discuss the available authorization options (that is, who can do what).

The authorization rules are defined in dataflow-server-defaults.yml (part of the Spring Cloud Data Flow Core module).

Because the determination of security roles is environment-specific, Spring Cloud Data Flow, by default, assigns all roles to authenticated OAuth2 users. The DefaultDataflowAuthoritiesExtractor class is used for that purpose.

Alternatively, you can have Spring Cloud Data Flow map OAuth2 scopes to Data Flow roles by setting the boolean property map-oauth-scopes for your provider to true (the default is false). For example, if your provider’s ID is uaa, the property would be spring.cloud.dataflow.security.authorization.provider-role-mappings.uaa.map-oauth-scopes. For more details, see the chapter on [configuration-security-role-mapping].

You can also customize the role-mapping behavior by providing your own Spring bean definition that implements Spring Cloud Data Flow’s AuthorityMapper interface. In that case, the custom bean definition takes precedence over the default one provided by Spring Cloud Data Flow.

The default scheme uses seven roles to protect the REST endpoints that Spring Cloud Data Flow exposes:

- ROLE_CREATE: For anything that involves creating, such as creating streams or tasks.
- ROLE_DEPLOY: For deploying streams or launching tasks.
- ROLE_DESTROY: For anything that involves deleting streams, tasks, and so on.
- ROLE_MANAGE: For Boot management endpoints.
- ROLE_MODIFY: For anything that involves mutating the state of the system.
- ROLE_SCHEDULE: For scheduling-related operations (such as scheduling a task).
- ROLE_VIEW: For anything that relates to retrieving state.
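Conceptually, when map-oauth-scopes is enabled, a role is granted only if the user's token carries a matching scope. The following Java sketch illustrates that idea with a fixed table. The table pairs the dataflow.* scopes that are registered for the UAA client later in this chapter with the seven roles. The table and the ScopeRoleMapping class are assumptions for illustration. They are not Data Flow's AuthorityMapper or its configured role mappings, which are described in the role-mapping chapter.

```java
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

final class ScopeRoleMapping {

    // Hypothetical scope-to-role table; the real mapping is configured per provider.
    static final Map<String, String> SCOPE_TO_ROLE = Map.of(
            "dataflow.create", "ROLE_CREATE",
            "dataflow.deploy", "ROLE_DEPLOY",
            "dataflow.destroy", "ROLE_DESTROY",
            "dataflow.manage", "ROLE_MANAGE",
            "dataflow.modify", "ROLE_MODIFY",
            "dataflow.schedule", "ROLE_SCHEDULE",
            "dataflow.view", "ROLE_VIEW");

    /** Maps granted scopes to roles; scopes without a table entry (such as openid) grant nothing. */
    static Set<String> rolesFor(Set<String> grantedScopes) {
        Set<String> roles = new TreeSet<>(); // sorted, for stable output
        for (String scope : grantedScopes) {
            String role = SCOPE_TO_ROLE.get(scope);
            if (role != null) {
                roles.add(role);
            }
        }
        return roles;
    }

    public static void main(String[] args) {
        System.out.println(rolesFor(Set.of("openid", "dataflow.view", "dataflow.create")));
    }
}
```

Running main prints [ROLE_CREATE, ROLE_VIEW]. By contrast, the default behavior described above grants all seven roles to every authenticated user.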

As mentioned earlier in this section, all authorization-related default settings are specified in dataflow-server-defaults.yml, which is part of the Spring Cloud Data Flow Core module. Nonetheless, you can override those settings if desired (for example, in application.yml). The configuration takes the form of a YAML list, because some rules may have precedence over others. Consequently, you need to copy and paste the whole list and tailor it to your needs, as there is no way to merge lists.

| Always refer to your version of the application.yml file, as the following snippet may be outdated. |

The default rules are as follows:

spring:
  cloud:
    dataflow:
      security:
        authorization:
          enabled: true
          loginUrl: "/"
          permit-all-paths: "/authenticate,/security/info,/assets/**,/dashboard/logout-success-oauth.html,/favicon.ico"
          rules:
            # About
            - GET /about => hasRole('ROLE_VIEW')
            # Audit
            - GET /audit-records => hasRole('ROLE_VIEW')
            - GET /audit-records/** => hasRole('ROLE_VIEW')
            # Boot Endpoints
            - GET /management/** => hasRole('ROLE_MANAGE')
            # Apps
            - GET /apps => hasRole('ROLE_VIEW')
            - GET /apps/** => hasRole('ROLE_VIEW')
            - DELETE /apps/** => hasRole('ROLE_DESTROY')
            - POST /apps => hasRole('ROLE_CREATE')
            - POST /apps/** => hasRole('ROLE_CREATE')
            - PUT /apps/** => hasRole('ROLE_MODIFY')
            # Completions
            - GET /completions/** => hasRole('ROLE_VIEW')
            # Job Executions & Batch Job Execution Steps && Job Step Execution Progress
            - GET /jobs/executions => hasRole('ROLE_VIEW')
            - PUT /jobs/executions/** => hasRole('ROLE_MODIFY')
            - GET /jobs/executions/** => hasRole('ROLE_VIEW')
            - GET /jobs/thinexecutions => hasRole('ROLE_VIEW')
            # Batch Job Instances
            - GET /jobs/instances => hasRole('ROLE_VIEW')
            - GET /jobs/instances/* => hasRole('ROLE_VIEW')
            # Running Applications
            - GET /runtime/streams => hasRole('ROLE_VIEW')
            - GET /runtime/streams/** => hasRole('ROLE_VIEW')
            - GET /runtime/apps => hasRole('ROLE_VIEW')
            - GET /runtime/apps/** => hasRole('ROLE_VIEW')
            # Stream Definitions
            - GET /streams/definitions => hasRole('ROLE_VIEW')
            - GET /streams/definitions/* => hasRole('ROLE_VIEW')
            - GET /streams/definitions/*/related => hasRole('ROLE_VIEW')
            - POST /streams/definitions => hasRole('ROLE_CREATE')
            - DELETE /streams/definitions/* => hasRole('ROLE_DESTROY')
            - DELETE /streams/definitions => hasRole('ROLE_DESTROY')
            # Stream Deployments
            - DELETE /streams/deployments/* => hasRole('ROLE_DEPLOY')
            - DELETE /streams/deployments => hasRole('ROLE_DEPLOY')
            - POST /streams/deployments/** => hasRole('ROLE_MODIFY')
            - GET /streams/deployments/** => hasRole('ROLE_VIEW')
            # Stream Validations
            - GET /streams/validation/ => hasRole('ROLE_VIEW')
            - GET /streams/validation/* => hasRole('ROLE_VIEW')
            # Stream Logs
            - GET /streams/logs/* => hasRole('ROLE_VIEW')
            # Task Definitions
            - POST /tasks/definitions => hasRole('ROLE_CREATE')
            - DELETE /tasks/definitions/* => hasRole('ROLE_DESTROY')
            - GET /tasks/definitions => hasRole('ROLE_VIEW')
            - GET /tasks/definitions/* => hasRole('ROLE_VIEW')
            # Task Executions
            - GET /tasks/executions => hasRole('ROLE_VIEW')
            - GET /tasks/executions/* => hasRole('ROLE_VIEW')
            - POST /tasks/executions => hasRole('ROLE_DEPLOY')
            - POST /tasks/executions/* => hasRole('ROLE_DEPLOY')
            - DELETE /tasks/executions/* => hasRole('ROLE_DESTROY')
            # Task Schedules
            - GET /tasks/schedules => hasRole('ROLE_VIEW')
            - GET /tasks/schedules/* => hasRole('ROLE_VIEW')
            - GET /tasks/schedules/instances => hasRole('ROLE_VIEW')
            - GET /tasks/schedules/instances/* => hasRole('ROLE_VIEW')
            - POST /tasks/schedules => hasRole('ROLE_SCHEDULE')
            - DELETE /tasks/schedules/* => hasRole('ROLE_SCHEDULE')
            # Task Platform Account List
            - GET /tasks/platforms => hasRole('ROLE_VIEW')
            # Task Validations
            - GET /tasks/validation/ => hasRole('ROLE_VIEW')
            - GET /tasks/validation/* => hasRole('ROLE_VIEW')
            # Task Logs
            - GET /tasks/logs/* => hasRole('ROLE_VIEW')
            # Tools
            - POST /tools/** => hasRole('ROLE_VIEW')

The format of each line is the following:

HTTP_METHOD URL_PATTERN '=>' SECURITY_ATTRIBUTE

where:

- HTTP_METHOD is one HTTP method (such as PUT or GET), in capital letters.
- URL_PATTERN is an Ant-style URL pattern.
- SECURITY_ATTRIBUTE is a SpEL expression. See Expression-Based Access Control.
- Each of those is separated by one or more whitespace characters (spaces, tabs, and so on).

Be mindful that the rules form a YAML list, not a map (hence the '-' dash at the start of each line), that lives under the spring.cloud.dataflow.security.authorization.rules key.
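The following Java 17 sketch makes the rule format concrete. It parses a rule line into its three parts and checks which rule applies to a request. It makes two simplifying assumptions. First, URL patterns are matched with a reduced Ant syntax (* within one path segment, ** across segments) rather than Spring's AntPathMatcher. Second, the first matching rule in list order wins, which is one way to read the earlier note that some rules have precedence over others. AuthorizationRules is a hypothetical name, and the sketch does not evaluate the SpEL expression.

```java
import java.util.List;
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class AuthorizationRules {

    /** One parsed rule: HTTP method, Ant-style URL pattern, and SpEL security attribute. */
    record Rule(String method, String urlPattern, String attribute) { }

    // HTTP_METHOD <ws> URL_PATTERN <ws> => <ws> SECURITY_ATTRIBUTE
    private static final Pattern RULE = Pattern.compile("^([A-Z]+)\\s+(\\S+)\\s+=>\\s+(.+)$");

    static Rule parse(String line) {
        Matcher m = RULE.matcher(line.trim());
        if (!m.matches()) {
            throw new IllegalArgumentException("Not a rule: " + line);
        }
        return new Rule(m.group(1), m.group(2), m.group(3).trim());
    }

    /** Simplified Ant matching: "/**" also matches the bare prefix, "*" stays within one segment. */
    static boolean antMatches(String pattern, String path) {
        StringBuilder regex = new StringBuilder();
        int i = 0;
        while (i < pattern.length()) {
            if (pattern.startsWith("/**", i)) {
                regex.append("(/.*)?");   // zero or more further segments
                i += 3;
            } else if (pattern.startsWith("**", i)) {
                regex.append(".*");
                i += 2;
            } else if (pattern.charAt(i) == '*') {
                regex.append("[^/]*");    // anything except a path separator
                i += 1;
            } else {
                regex.append(Pattern.quote(String.valueOf(pattern.charAt(i))));
                i += 1;
            }
        }
        return path.matches(regex.toString());
    }

    /** Returns the attribute of the first rule (in list order) matching the method and path. */
    static Optional<String> firstMatch(List<Rule> rules, String method, String path) {
        return rules.stream()
                .filter(r -> r.method().equals(method) && antMatches(r.urlPattern(), path))
                .map(Rule::attribute)
                .findFirst();
    }

    public static void main(String[] args) {
        List<Rule> rules = List.of(
                parse("GET /apps => hasRole('ROLE_VIEW')"),
                parse("GET /apps/** => hasRole('ROLE_VIEW')"),
                parse("DELETE /apps/** => hasRole('ROLE_DESTROY')"));
        System.out.println(firstMatch(rules, "DELETE", "/apps/source/time"));
        System.out.println(firstMatch(rules, "PUT", "/apps/source/time"));
    }
}
```

Running main prints Optional[hasRole('ROLE_DESTROY')] followed by Optional.empty, because none of the three sample rules covers PUT.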

Authorization — Shell and Dashboard Behavior

When security is enabled, the dashboard and the shell are role-aware, meaning that, depending on the assigned roles, not all functionality may be visible.

For instance, shell commands for which the user does not have the necessary roles are marked as unavailable.

| Currently, the shell’s |

Conversely, for the Dashboard, the UI does not show pages or page elements for which the user is not authorized.

Securing the Spring Boot Management Endpoints

When security is enabled, the Spring Boot HTTP Management Endpoints are secured in the same way as the other REST endpoints. The management REST endpoints are available under /management and require the MANAGE role (ROLE_MANAGE).

The default configuration in dataflow-server-defaults.yml is as follows:

management:
  endpoints:
    web:
      base-path: /management
  security:
    roles: MANAGE

| Currently, you should not customize the default management path. |

8.4. Setting up UAA Authentication

For local deployment scenarios, we recommend using the CloudFoundry User Account and Authentication (UAA) Server, which is OpenID certified. While the UAA is used by Cloud Foundry, it is also a fully featured standalone OAuth2 server with enterprise features, such as LDAP integration.

Requirements

You need to check out, build, and run UAA. To do so, make sure that you:

- Use Java 8.
- Have Git installed.
- Have the CloudFoundry UAA Command Line Client installed.
- Use a different host name for UAA when running on the same machine (for example, uaa).

If you run into issues installing uaac, you may have to set the GEM_HOME environment variable:

export GEM_HOME="$HOME/.gem"

You should also ensure that ~/.gem/gems/cf-uaac-4.2.0/bin has been added to your path.

Prepare UAA for JWT

As the UAA is an OpenID provider and uses JSON Web Tokens (JWT), it needs to have a private key for signing those JWTs:

openssl genrsa -out signingkey.pem 2048
openssl rsa -in signingkey.pem -pubout -out verificationkey.pem
export JWT_TOKEN_SIGNING_KEY=$(cat signingkey.pem)
export JWT_TOKEN_VERIFICATION_KEY=$(cat verificationkey.pem)

Later, once the UAA is started, you can see the keys when you access http://uaa:8080/uaa/token_keys.

| Here, the uaa in the URL http://uaa:8080/uaa/token_keys is the hostname. |

Download and Start UAA

To download and install UAA, run the following commands:

git clone https://github.com/pivotal/uaa-bundled.git
cd uaa-bundled
./mvnw clean install
java -jar target/uaa-bundled-1.0.0.BUILD-SNAPSHOT.jar

The configuration of the UAA is driven by a YAML file (uaa.yml), or you can script the configuration by using the UAA Command Line Client:

uaac target http://uaa:8080/uaa
uaac token client get admin -s adminsecret
uaac client add dataflow \
  --name dataflow \
  --secret dataflow \
  --scope cloud_controller.read,cloud_controller.write,openid,password.write,scim.userids,sample.create,sample.view,dataflow.create,dataflow.deploy,dataflow.destroy,dataflow.manage,dataflow.modify,dataflow.schedule,dataflow.view \
  --authorized_grant_types password,authorization_code,client_credentials,refresh_token \
  --authorities uaa.resource,dataflow.create,dataflow.deploy,dataflow.destroy,dataflow.manage,dataflow.modify,dataflow.schedule,dataflow.view,sample.view,sample.create \
  --redirect_uri http://localhost:9393/login \
  --autoapprove openid

uaac group add "sample.view"
uaac group add "sample.create"
uaac group add "dataflow.view"
uaac group add "dataflow.create"

uaac user add springrocks -p mysecret --emails [email protected]
uaac user add vieweronly -p mysecret --emails [email protected]

uaac member add "sample.view" springrocks
uaac member add "sample.create" springrocks
uaac member add "dataflow.view" springrocks
uaac member add "dataflow.create" springrocks
uaac member add "sample.view" vieweronly

The preceding script sets up the dataflow client as well as two users:

- User springrocks has the sample.view and sample.create scopes and is also a member of the dataflow.view and dataflow.create groups.
- User vieweronly has only one scope: sample.view.

Once added, you can quickly double-check that the UAA has the users created:

curl -v -d"username=springrocks&password=mysecret&client_id=dataflow&grant_type=password" -u "dataflow:dataflow" http://uaa:8080/uaa/oauth/token -d 'token_format=opaque'

The preceding command should produce output similar to the following:

* Trying 127.0.0.1...
* TCP_NODELAY set
* Connected to uaa (127.0.0.1) port 8080 (#0)
* Server auth using Basic with user 'dataflow'
> POST /uaa/oauth/token HTTP/1.1
> Host: uaa:8080
> Authorization: Basic ZGF0YWZsb3c6ZGF0YWZsb3c=
> User-Agent: curl/7.54.0
> Accept: */*
> Content-Length: 97
> Content-Type: application/x-www-form-urlencoded
>
* upload completely sent off: 97 out of 97 bytes
< HTTP/1.1 200
< Cache-Control: no-store
< Pragma: no-cache
< X-XSS-Protection: 1; mode=block
< X-Frame-Options: DENY
< X-Content-Type-Options: nosniff
< Content-Type: application/json;charset=UTF-8
< Transfer-Encoding: chunked
< Date: Thu, 31 Oct 2019 21:22:59 GMT
<
* Connection #0 to host uaa left intact

{"access_token":"0329c8ecdf594ee78c271e022138be9d","token_type":"bearer","id_token":"eyJhbGciOiJSUzI1NiIsImprdSI6Imh0dHBzOi8vbG9jYWxob3N0OjgwODAvdWFhL3Rva2VuX2tleXMiLCJraWQiOiJsZWdhY3ktdG9rZW4ta2V5IiwidHlwIjoiSldUIn0.eyJzdWIiOiJlZTg4MDg4Ny00MWM2LTRkMWQtYjcyZC1hOTQ4MmFmNGViYTQiLCJhdWQiOlsiZGF0YWZsb3ciXSwiaXNzIjoiaHR0cDovL2xvY2FsaG9zdDo4MDkwL3VhYS9vYXV0aC90b2tlbiIsImV4cCI6MTU3MjYwMDE3OSwiaWF0IjoxNTcyNTU2OTc5LCJhbXIiOlsicHdkIl0sImF6cCI6ImRhdGFmbG93Iiwic2NvcGUiOlsib3BlbmlkIl0sImVtYWlsIjoic3ByaW5ncm9ja3NAc29tZXBsYWNlLmNvbSIsInppZCI6InVhYSIsIm9yaWdpbiI6InVhYSIsImp0aSI6IjAzMjljOGVjZGY1OTRlZTc4YzI3MWUwMjIxMzhiZTlkIiwiZW1haWxfdmVyaWZpZWQiOnRydWUsImNsaWVudF9pZCI6ImRhdGFmbG93IiwiY2lkIjoiZGF0YWZsb3ciLCJncmFudF90eXBlIjoicGFzc3dvcmQiLCJ1c2VyX25hbWUiOiJzcHJpbmdyb2NrcyIsInJldl9zaWciOiJlOTkyMDQxNSIsInVzZXJfaWQiOiJlZTg4MDg4Ny00MWM2LTRkMWQtYjcyZC1hOTQ4MmFmNGViYTQiLCJhdXRoX3RpbWUiOjE1NzI1NTY5Nzl9.bqYvicyCPB5cIIu_2HEe5_c7nSGXKw7B8-reTvyYjOQ2qXSMq7gzS4LCCQ-CMcb4IirlDaFlQtZJSDE-_UsM33-ThmtFdx--TujvTR1u2nzot4Pq5A_ThmhhcCB21x6-RNNAJl9X9uUcT3gKfKVs3gjE0tm2K1vZfOkiGhjseIbwht2vBx0MnHteJpVW6U0pyCWG_tpBjrNBSj9yLoQZcqrtxYrWvPHaa9ljxfvaIsOnCZBGT7I552O1VRHWMj1lwNmRNZy5koJFPF7SbhiTM8eLkZVNdR3GEiofpzLCfoQXrr52YbiqjkYT94t3wz5C6u1JtBtgc2vq60HmR45bvg","refresh_token":"6ee95d017ada408697f2d19b04f7aa6c-r","expires_in":43199,"scope":"scim.userids openid sample.create cloud_controller.read password.write cloud_controller.write sample.view","jti":"0329c8ecdf594ee78c271e022138be9d"}

By using the token_format parameter, you can request the token to be either:

- opaque
- jwt
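The id_token in the preceding response is a JWT, as is any token requested with token_format=jwt: three base64url-encoded segments separated by dots. The following Java sketch uses a hypothetical JwtPeek class to decode the middle segment, so that you can inspect claims such as user_name and scope. It deliberately does not verify the signature. Real validation should check the signature against the keys published at the UAA token_keys endpoint, using a JOSE library. The access_token shown above was requested as opaque, so you cannot decode it this way.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

final class JwtPeek {

    /**
     * Returns the decoded JSON payload (the second segment) of a JWT.
     * The signature is NOT verified; use this only for inspection.
     */
    static String payloadJson(String jwt) {
        String[] parts = jwt.split("\\.");
        if (parts.length != 3) {
            throw new IllegalArgumentException("Expected header.payload.signature");
        }
        // The URL-safe decoder accepts the unpadded segments that JWTs use.
        byte[] json = Base64.getUrlDecoder().decode(parts[1]);
        return new String(json, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        if (args.length != 1) {
            System.err.println("usage: java JwtPeek.java <jwt>");
            return;
        }
        System.out.println(payloadJson(args[0]));
    }
}
```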

9. Configuration - Local

9.1. Feature Toggles

Spring Cloud Data Flow Server offers a specific set of features that you can enable or disable when launching the server. These features include all the lifecycle operations and REST endpoints (server and client implementations, including the shell and the UI) for:

- Streams (requires Skipper)
- Tasks
- Task Scheduler

You can enable and disable these features by setting the following boolean properties when launching the Data Flow server:

- spring.cloud.dataflow.features.streams-enabled
- spring.cloud.dataflow.features.tasks-enabled
- spring.cloud.dataflow.features.schedules-enabled

By default, streams (which require Skipper) and tasks are enabled, and the Task Scheduler is disabled.

The REST /about endpoint provides information on the features that have been enabled and disabled.
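The toggles are ordinary Spring Boot properties, so you can pass them as --name=value arguments at startup. The following Java sketch uses a hypothetical FeatureToggleArgs class to assemble such a command line, for example to turn on the Task Scheduler, which is disabled by default. The jar name matches the 2.10.3 server jar used elsewhere in this document. Adjust it for your version.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class FeatureToggleArgs {

    static final String PREFIX = "spring.cloud.dataflow.features.";

    /** Builds a "java -jar <serverJar> --spring.cloud.dataflow.features.<name>=<bool>" command. */
    static List<String> command(String serverJar, Map<String, Boolean> features) {
        List<String> cmd = new ArrayList<>(List.of("java", "-jar", serverJar));
        features.forEach((name, enabled) -> cmd.add("--" + PREFIX + name + "=" + enabled));
        return cmd;
    }

    public static void main(String[] args) {
        Map<String, Boolean> features = new LinkedHashMap<>();
        features.put("streams-enabled", true);
        features.put("tasks-enabled", true);
        features.put("schedules-enabled", true); // off by default
        List<String> cmd = command("spring-cloud-dataflow-server-2.10.3.jar", features);
        System.out.println(String.join(" ", cmd));
        // To actually launch it: new ProcessBuilder(cmd).inheritIO().start();
    }
}
```

After startup, the /about endpoint reports which features are active.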

9.2. Database

A relational database is used to store stream and task definitions as well as the state of executed tasks. Spring Cloud Data Flow provides schemas for MariaDB, MySQL, Oracle, PostgreSQL, Db2, SQL Server, and H2. The schema is automatically created when the server starts.

| The JDBC drivers for MariaDB, MySQL (through the MariaDB driver), PostgreSQL, and SQL Server are available without additional configuration. To use any other database, you need to put the corresponding JDBC driver jar on the classpath of the server, as described in Adding a Custom JDBC Driver. |

To configure a database, the following properties must be set:

- spring.datasource.url
- spring.datasource.username
- spring.datasource.password
- spring.datasource.driver-class-name

The username and password properties are set the same way regardless of the database. However, the url and driver-class-name values vary per database, as follows:

| Database | spring.datasource.url | spring.datasource.driver-class-name | Driver included |
|---|---|---|---|
| MariaDB 10.4+ | jdbc:mariadb://${db-hostname}:${db-port}/${db-name} | org.mariadb.jdbc.Driver | Yes |
| MySQL 5.7 | jdbc:mysql://${db-hostname}:${db-port}/${db-name}?permitMysqlScheme | org.mariadb.jdbc.Driver | Yes |
| MySQL 8.0+ | jdbc:mysql://${db-hostname}:${db-port}/${db-name}?allowPublicKeyRetrieval=true&useSSL=false&autoReconnect=true&permitMysqlScheme[1] | org.mariadb.jdbc.Driver | Yes |
| PostgreSQL | jdbc:postgresql://${db-hostname}:${db-port}/${db-name} | org.postgresql.Driver | Yes |
| SQL Server | jdbc:sqlserver://${db-hostname}:${db-port};databasename=${db-name};encrypt=false | com.microsoft.sqlserver.jdbc.SQLServerDriver | Yes |
| DB2 | jdbc:db2://${db-hostname}:${db-port}/${db-name} | com.ibm.db2.jcc.DB2Driver | No |
| Oracle | jdbc:oracle:thin:@${db-hostname}:${db-port}/${db-name} | oracle.jdbc.OracleDriver | No |
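The following Java sketch uses a hypothetical DatasourceSettings class. For a few of the databases in the table, it fills in the URL templates and produces the four spring.datasource.* properties, which you can then pass as command-line arguments or environment variables. Note that the PostgreSQL driver expects the jdbc:postgresql: URL prefix. The host, port, database name, and credentials in main are placeholder values.

```java
import java.util.Map;
import java.util.TreeMap;

final class DatasourceSettings {

    /** URL template (host, port, database name) and driver class, per database. */
    enum Database {
        MARIADB("jdbc:mariadb://%s:%d/%s", "org.mariadb.jdbc.Driver"),
        POSTGRESQL("jdbc:postgresql://%s:%d/%s", "org.postgresql.Driver"),
        DB2("jdbc:db2://%s:%d/%s", "com.ibm.db2.jcc.DB2Driver"),
        ORACLE("jdbc:oracle:thin:@%s:%d/%s", "oracle.jdbc.OracleDriver");

        final String urlTemplate;
        final String driverClassName;

        Database(String urlTemplate, String driverClassName) {
            this.urlTemplate = urlTemplate;
            this.driverClassName = driverClassName;
        }
    }

    /** Returns the four spring.datasource.* properties, sorted by key. */
    static Map<String, String> properties(Database db, String host, int port, String dbName,
                                          String username, String password) {
        Map<String, String> props = new TreeMap<>();
        props.put("spring.datasource.url", String.format(db.urlTemplate, host, port, dbName));
        props.put("spring.datasource.username", username);
        props.put("spring.datasource.password", password);
        props.put("spring.datasource.driver-class-name", db.driverClassName);
        return props;
    }

    public static void main(String[] args) {
        properties(Database.POSTGRESQL, "localhost", 5432, "mydb", "myuser", "mypass")
                .forEach((key, value) -> System.out.println("--" + key + "=" + value));
    }
}
```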

9.2.1. H2

When no other database is configured, Spring Cloud Data Flow uses an embedded instance of the H2 database as the default.

| H2 is good for development purposes but is not recommended for production use, nor is it supported as an external mode. |

9.2.2. Database configuration

When running locally, you can pass the database properties to the Data Flow Server as environment variables or command-line arguments. For example, to start the server with MariaDB by using command-line arguments, run the following command:

java -jar spring-cloud-dataflow-server/target/spring-cloud-dataflow-server-2.10.3.jar \
    --spring.datasource.url=jdbc:mariadb://localhost:3306/mydb \
    --spring.datasource.username=user \
    --spring.datasource.password=pass \
    --spring.datasource.driver-class-name=org.mariadb.jdbc.Driver

Likewise, to start the server with MariaDB by using environment variables, run the following commands:

export SPRING_DATASOURCE_URL=jdbc:mariadb://localhost:3306/mydb
export SPRING_DATASOURCE_USERNAME=user
export SPRING_DATASOURCE_PASSWORD=pass
export SPRING_DATASOURCE_DRIVER_CLASS_NAME=org.mariadb.jdbc.Driver
java -jar spring-cloud-dataflow-server/target/spring-cloud-dataflow-server-2.10.3.jar

9.2.3. Adding a Custom JDBC Driver

To add a custom driver for the database (for example, Oracle), you need to add the dependency to the Maven pom.xml file of the spring-cloud-dataflow-server module and rebuild the Data Flow Server. The GitHub repository has GA release tags, so you can switch to the desired GA tag to add the drivers to a production-ready codebase.

To add a custom JDBC driver dependency for the Spring Cloud Data Flow server:

- Clone the GitHub repository and check out the tag that corresponds to the version of the server you want to rebuild.
- Edit spring-cloud-dataflow-server/pom.xml and, in the dependencies section, add the dependency for the required database driver. In the following example, an Oracle driver has been chosen:

<dependencies>
    ...
    <dependency>
        <groupId>com.oracle.jdbc</groupId>
        <artifactId>ojdbc8</artifactId>
        <version>12.2.0.1</version>
    </dependency>
    ...
</dependencies>

- Build the application as described in Building Spring Cloud Data Flow.

You can also provide default values when rebuilding the server by adding the necessary properties to the dataflow-server.yml file, as shown in the following example for PostgreSQL:

spring:
  datasource:
    url: jdbc:postgresql://localhost:5432/mydb
    username: myuser
    password: mypass
    driver-class-name: org.postgresql.Driver

9.2.4. Schema Handling

On default database schema is managed with Flyway which is convenient if it’s possible to give enough permissions to a database user.

Here’s a description what happens when Skipper server is started:

-

Flyway checks if

flyway_schema_historytable exists. -

Does a baseline(to version 1) if schema is not empty as Dataflow tables may be in place if a shared DB is used.

-

If schema is empty, flyway assumes to start from a scratch.

-

Goes through all needed schema migrations.

The following happens when the Data Flow server starts:

- Flyway checks whether the flyway_schema_history_dataflow table exists.
- If the schema is not empty, Flyway performs a baseline (to version 1), because Skipper tables may already be in place if a shared database is used.
- If the schema is empty, Flyway starts from scratch.
- Flyway applies all needed schema migrations.
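The startup sequences above share one decision pattern. The following is a simplified, hypothetical model of that pattern, not Flyway's or Data Flow's code: it only returns the listed steps in order, the step labels are made up for illustration, and it assumes that when the history table already exists, Flyway goes straight to pending migrations.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of the schema-handling steps described above.
// It does not call Flyway; it only returns the actions in order.
final class SchemaStartupPlan {

    static List<String> plan(boolean historyTableExists, boolean schemaEmpty) {
        List<String> steps = new ArrayList<>();
        if (!historyTableExists) {
            if (schemaEmpty) {
                // Nothing in the schema yet: start from scratch.
                steps.add("start from scratch");
            } else {
                // Tables owned by the other server may exist in a shared database.
                steps.add("baseline to version 1");
            }
        }
        // Assumption: in every case, the remaining migrations are applied.
        steps.add("apply pending migrations");
        return steps;
    }
}
```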

We provide schema DDLs in our source code (schemas), which you can apply manually if Flyway is disabled through configuration.

9.3. Deployer Properties

You can use the following configuration properties of the Local deployer to customize how Streams and Tasks are deployed.

When deploying with the Data Flow shell, you can use the syntax deployer.<appName>.local.<deployerPropertyName>. See the example of shell usage after the following table.

These properties are also used when configuring Local Task Platforms in the Data Flow server and local platforms in Skipper for deploying Streams.

| Deployer Property Name | Description | Default Value |
|---|---|---|
| workingDirectoriesRoot | Directory in which all created processes run and create log files. | java.io.tmpdir |
| envVarsToInherit | Array of regular expression patterns for environment variables that are passed to launched applications. | <"TMP", "LANG", "LANGUAGE", "LC_.*", "PATH", "SPRING_APPLICATION_JSON"> on Windows and <"TMP", "LANG", "LANGUAGE", "LC_.*", "PATH"> on Unix |
| deleteFilesOnExit | Whether to delete created files and directories on JVM exit. | true |
| javaCmd | Command to run Java. | java |
| shutdownTimeout | Maximum number of seconds to wait for app shutdown. | 30 |
| javaOpts | The Java options to pass to the JVM, for example -Dtest=foo. | <none> |
| inheritLogging | Allow logging to be redirected to the output stream of the process that triggered the child process. | false |
| debugPort | Port for remote debugging. | <none> |
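To illustrate how envVarsToInherit-style patterns select variables, the following hypothetical sketch filters an environment map against a list of patterns such as the Unix defaults above. It is not the local deployer's code, and it assumes each pattern must match the whole variable name (so LC_.* keeps LC_ALL, while PATH does not keep CLASSPATH).

```java
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

// Hypothetical sketch: keep only the environment variables whose names
// match one of the given regular expressions (whole-name match assumed).
final class EnvVarsToInherit {

    static Map<String, String> filter(Map<String, String> env, List<String> patterns) {
        List<Pattern> compiled = patterns.stream()
                .map(Pattern::compile)
                .collect(Collectors.toList());
        Map<String, String> inherited = new TreeMap<>();
        for (Map.Entry<String, String> entry : env.entrySet()) {
            String name = entry.getKey();
            if (compiled.stream().anyMatch(p -> p.matcher(name).matches())) {
                inherited.put(name, entry.getValue());
            }
        }
        return inherited;
    }
}
```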

As an example, to set Java options for the time application in the ticktock stream, use the following stream deployment properties.

dataflow:> stream create --name ticktock --definition "time --server.port=9000 | log"

dataflow:> stream deploy --name ticktock --properties "deployer.time.local.javaOpts=-Xmx2048m -Dtest=foo"

As a convenience, you can set the deployer.<app>.memory property to set the Java option -Xmx, as shown in the following example:

dataflow:> stream deploy --name ticktock --properties "deployer.time.memory=2048m"

At deployment time, if you specify an -Xmx option in the deployer.<app>.local.javaOpts property in addition to a value of the deployer.<app>.local.memory option, the value in the javaOpts property has precedence. Also, the javaOpts property set when deploying the application has precedence over the Data Flow Server’s spring.cloud.deployer.local.javaOpts property.
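The precedence rule between javaOpts and memory can be sketched as follows. This is a hypothetical illustration, not the deployer's implementation: it assumes that an -Xmx token already present in javaOpts wins, and that otherwise the memory value is turned into an -Xmx option placed in front of the other options.

```java
// Hypothetical sketch of the javaOpts-over-memory precedence rule.
final class EffectiveJavaOpts {

    static String resolve(String javaOpts, String memory) {
        String opts = javaOpts == null ? "" : javaOpts.trim();
        boolean hasXmx = false;
        for (String token : opts.split("\\s+")) {
            if (token.startsWith("-Xmx")) {
                // An explicit -Xmx in javaOpts takes precedence.
                hasXmx = true;
                break;
            }
        }
        if (hasXmx || memory == null || memory.isBlank()) {
            return opts;
        }
        // No -Xmx given: derive one from the memory property.
        String xmx = "-Xmx" + memory.trim();
        return opts.isEmpty() ? xmx : xmx + " " + opts;
    }
}
```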

9.4. Logging

The Spring Cloud Data Flow local server is automatically configured to use a RollingFileAppender for logging.

The logging configuration is in a file named logback-spring.xml on the classpath.

By default, the log file is configured to use:

<property name="LOG_FILE" value="${LOG_FILE:-${LOG_PATH:-${LOG_TEMP:-${java.io.tmpdir:-/tmp}}}/spring-cloud-dataflow-server-local.log}"/>

with the following logback configuration for the rolling policy:

<appender name="FILE"
          class="ch.qos.logback.core.rolling.RollingFileAppender">
    <file>${LOG_FILE}</file>
    <rollingPolicy
            class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">
        <!-- daily rolling -->
        <fileNamePattern>${LOG_FILE}.${LOG_FILE_ROLLING_FILE_NAME_PATTERN:-%d{yyyy-MM-dd}}.%i.gz</fileNamePattern>
        <maxFileSize>${LOG_FILE_MAX_SIZE:-100MB}</maxFileSize>
        <maxHistory>${LOG_FILE_MAX_HISTORY:-30}</maxHistory>
        <totalSizeCap>${LOG_FILE_TOTAL_SIZE_CAP:-500MB}</totalSizeCap>
    </rollingPolicy>
    <encoder>
        <pattern>${FILE_LOG_PATTERN}</pattern>
    </encoder>
</appender>

To check the java.io.tmpdir value for the currently running Spring Cloud Data Flow local server, run:

jinfo <pid> | grep "java.io.tmpdir"

If you want to change or override any of the properties LOG_FILE, LOG_PATH, LOG_TEMP, LOG_FILE_MAX_SIZE, LOG_FILE_MAX_HISTORY, and LOG_FILE_TOTAL_SIZE_CAP, set them as system properties.
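The nested ${...:-...} defaults in the LOG_FILE property form a fallback chain. The following hypothetical sketch mirrors that chain and the default daily rolled-file name, so you can predict where logs end up after setting, for example, -DLOG_PATH as a system property. Logback performs the real substitution; the lookup map here stands in for the properties it consults, and the rolled name assumes LOG_FILE_ROLLING_FILE_NAME_PATTERN is not overridden.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.util.Map;

// Hypothetical mirror of the default LOG_FILE fallback chain and of the
// default rolled-file naming (${LOG_FILE}.%d{yyyy-MM-dd}.%i.gz).
final class LogFileLocation {

    static String resolve(Map<String, String> props) {
        String explicit = props.get("LOG_FILE");
        if (explicit != null) {
            return explicit;
        }
        // LOG_PATH, then LOG_TEMP, then java.io.tmpdir, then /tmp.
        String dir = firstNonNull(props.get("LOG_PATH"), props.get("LOG_TEMP"),
                props.get("java.io.tmpdir"), "/tmp");
        return dir + "/spring-cloud-dataflow-server-local.log";
    }

    static String rolledName(String logFile, LocalDate day, int index) {
        String date = day.format(DateTimeFormatter.ofPattern("yyyy-MM-dd"));
        return logFile + "." + date + "." + index + ".gz";
    }

    private static String firstNonNull(String... values) {
        for (String v : values) {
            if (v != null) {
                return v;
            }
        }
        throw new IllegalStateException("no value available");
    }
}
```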

9.5. Streams

The Data Flow server delegates management of the stream lifecycle to the Skipper server. Set the spring.cloud.skipper.client.serverUri configuration property to the location of Skipper, as follows:

$ java -jar spring-cloud-dataflow-server-2.10.3.jar --spring.cloud.skipper.client.serverUri=https://192.51.100.1:7577/api

How streams are deployed, and to which platforms, is configured through platform accounts on the Skipper server.

See the documentation on platforms for more information.

9.6. Tasks

The Data Flow server is responsible for deploying Tasks.

Tasks that are launched by Data Flow write their state to the same database that is used by the Data Flow server.

For Tasks which are Spring Batch Jobs, the job and step execution data is also stored in this database.

As with streams launched by Skipper, Tasks can be launched to multiple platforms.

If no platform is defined, a platform named default is created by using the default values of the LocalDeployerProperties class, which are summarized in the Local Deployer Properties table.

To configure new platform accounts for the local platform, provide an entry under the spring.cloud.dataflow.task.platform.local section in your application.yaml file or via another Spring Boot supported mechanism.

In the following example, two local platform accounts named localDev and localDevDebug are created.

The keys such as shutdownTimeout and javaOpts are local deployer properties.

spring:
  cloud:
    dataflow:
      task:
        platform:
          local:
            accounts:
              localDev:
                shutdownTimeout: 60
                javaOpts: "-Dtest=foo -Xmx1024m"
              localDevDebug:
                javaOpts: "-Xdebug -Xmx2048m"

Defining one platform as default lets you omit platformName where it would otherwise be required.

When launching a task, pass the value of the platform account name by using the task launch option --platformName. If you do not pass a value for platformName, the value default is used.

When deploying a task to multiple platforms, the configuration of the task needs to connect to the same database as the Data Flow Server.
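The platform lookup described above can be sketched as a map lookup with a fallback name. This is a hypothetical illustration, not Data Flow's code: the account map stands in for the configured platform accounts (such as localDev and localDevDebug), and failing on an unknown name is an assumption about the behavior.

```java
import java.util.Map;

// Hypothetical sketch: an explicit --platformName wins; otherwise the
// account named "default" is used.
final class TaskPlatformSelector {

    static <T> T select(Map<String, T> accounts, String platformName) {
        String name = (platformName == null || platformName.isBlank()) ? "default" : platformName;
        T account = accounts.get(name);
        if (account == null) {
            throw new IllegalArgumentException("No platform account named '" + name + "'");
        }
        return account;
    }
}
```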

You can configure the Data Flow server that is running locally to deploy tasks to Cloud Foundry or Kubernetes. See the sections on Cloud Foundry Task Platform Configuration and Kubernetes Task Platform Configuration for more information.

Detailed examples of launching and scheduling tasks across multiple platforms are available in the Multiple Platform Support for Tasks section on dataflow.spring.io.

9.7. Security Configuration

9.7.1. CloudFoundry User Account and Authentication (UAA) Server

See the CloudFoundry User Account and Authentication (UAA) Server configuration section for details on how to configure it for local testing and development.

9.7.2. LDAP Authentication

Spring Cloud Data Flow provides LDAP (Lightweight Directory Access Protocol) authentication indirectly, through the UAA. The UAA itself provides comprehensive LDAP support.

While you may use your own OAuth2 authentication server, the LDAP support documented here requires using the UAA as the authentication server. For any other provider, consult the documentation for that particular provider.

The UAA supports authentication against an LDAP (Lightweight Directory Access Protocol) server using the following modes:

When integrating with an external identity provider such as LDAP, authentication within the UAA becomes chained. The UAA first attempts to authenticate a user’s credentials against the UAA user store before trying the external provider, LDAP. For more information, see Chained Authentication in the User Account and Authentication LDAP Integration GitHub documentation.

LDAP Role Mapping

The OAuth2 authentication server (UAA) provides comprehensive support for mapping LDAP groups to OAuth scopes.

The following options exist:

- ldap/ldap-groups-null.xml: No groups are mapped.
- ldap/ldap-groups-as-scopes.xml: Group names are retrieved from an LDAP attribute, for example, CN.
- ldap/ldap-groups-map-to-scopes.xml: Groups are mapped to UAA groups by using the external_group_mapping table.

These values are specified through the ldap.groups.file configuration property. Under the covers, these values reference a Spring XML configuration file.

During testing and development, you might need to make frequent changes to LDAP groups and users and see them reflected in the UAA. However, user information is cached for the duration of the login. The following script helps to retrieve the updated information quickly:

9.7.3. Spring Security OAuth2 Resource/Authorization Server Sample

For local testing and development, you may also use the Resource and Authorization Server support provided by Spring Security. It allows you to easily create your own OAuth2 Server by configuring the SecurityFilterChain.

Samples can be found at: Spring Security Samples

9.7.4. Data Flow Shell Authentication

When using the Shell, you can provide credentials either with a username and password or by specifying a credentials-provider command. If your OAuth2 provider supports the Password Grant Type, you can start the Data Flow Shell with:

$ java -jar spring-cloud-dataflow-shell-2.10.3.jar \
  --dataflow.uri=http://localhost:9393 \ (1)
  --dataflow.username=my_username \ (2)
  --dataflow.password=my_password \ (3)
  --skip-ssl-validation (4)

| 1 | Optional, defaults to localhost:9393. |
| 2 | Mandatory. |
| 3 | If the password is not provided, the user is prompted for it. |
| 4 | Optional, defaults to false, ignores certificate errors (when using self-signed certificates). Use cautiously! |

Keep in mind that when authentication for Spring Cloud Data Flow is enabled, the underlying OAuth2 provider must support the Password OAuth2 Grant Type if you want to use the Shell with username/password authentication.

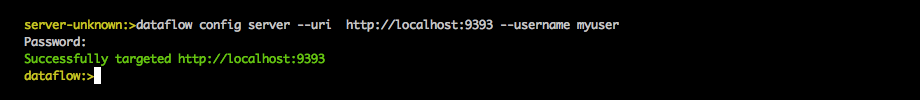

From within the Data Flow Shell you can also provide credentials by using the following command:

server-unknown:>dataflow config server \

--uri http://localhost:9393 \ (1)

--username myuser \ (2)

--password mysecret \ (3)

--skip-ssl-validation \ (4)| 1 | Optional, defaults to localhost:9393. |

| 2 | Mandatory.. |

| 3 | If security is enabled, and the password is not provided, the user is prompted for it. |

| 4 | Optional, ignores certificate errors (when using self-signed certificates). Use cautiously! |

The following image shows a typical shell command to connect to and authenticate a Data Flow Server:

Once successfully targeted, you should see the following output:

dataflow:>dataflow config info

dataflow config info

╔═══════════╤═══════════════════════════════════════╗

║Credentials│[username='my_username, password=****']║

╠═══════════╪═══════════════════════════════════════╣

║Result │ ║

║Target │http://localhost:9393 ║

╚═══════════╧═══════════════════════════════════════╝

Alternatively, you can specify the credentials-provider command to pass in a bearer token directly, instead of providing a username and password. This works from within the shell or by providing the --dataflow.credentials-provider-command command-line argument when starting the Shell.

When using the credentials-provider command, be aware that the command you specify must return a Bearer token (an access token prefixed with Bearer). For instance, in Unix environments, the following simplistic command can be used:
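Whatever produces the token, the output must carry the Bearer prefix. The following hypothetical helper shows that formatting step only; how the raw access token is obtained, and how you wrap the helper into a command that prints its result to standard output, are left to you and are not part of the Data Flow Shell.

```java
// Hypothetical helper that turns a raw access token into the
// "Bearer <token>" form a credentials-provider command must return.
final class BearerToken {

    static String toBearer(String accessToken) {
        if (accessToken == null || accessToken.isBlank()) {
            throw new IllegalArgumentException("access token is empty");
        }
        String token = accessToken.trim();
        // Avoid doubling the prefix if the source already includes it.
        return token.startsWith("Bearer ") ? token : "Bearer " + token;
    }
}
```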

9.8. About API Configuration

The Spring Cloud Data Flow About RESTful API result contains a display name, version, and, if specified, a URL for each of the major dependencies that comprise Spring Cloud Data Flow. The result (if enabled) also contains the SHA-1 and/or SHA-256 checksum values for the shell dependency. You can configure the information that is returned for each dependency by setting the following properties:

| Property Name | Description |
|---|---|
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-core.name | Name to be used for the core |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-core.version | Version to be used for the core |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-dashboard.name | Name to be used for the dashboard |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-dashboard.version | Version to be used for the dashboard |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-implementation.name | Name to be used for the implementation |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-implementation.version | Version to be used for the implementation |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-shell.name | Name to be used for the shell |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-shell.version | Version to be used for the shell |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-shell.url | URL to be used for downloading the shell dependency |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-shell.checksum-sha1 | SHA-1 checksum value that is returned with the shell dependency info |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-shell.checksum-sha256 | SHA-256 checksum value that is returned with the shell dependency info |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-shell.checksum-sha1-url | if |
| spring.cloud.dataflow.version-info.spring-cloud-dataflow-shell.checksum-sha256-url | if the |

9.8.1. Enabling Shell Checksum values

By default, checksum values are not displayed for the shell dependency. If you need this feature enabled, set the spring.cloud.dataflow.version-info.dependency-fetch.enabled property to true.
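If you publish your own shell artifact and want to supply values for the checksum-sha1 or checksum-sha256 properties, you first need the hex-encoded digests. The following is a hypothetical helper that computes them with the JDK's MessageDigest ("SHA-1" and "SHA-256" are standard JDK algorithm names); the class name and the file you hash are illustrative assumptions.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Hypothetical helper: hex-encoded SHA-1 / SHA-256 digests of bytes or a file.
final class ShellChecksum {

    static String digest(String algorithm, byte[] data) {
        return hex(newDigest(algorithm).digest(data));
    }

    static String digestFile(String algorithm, Path file) {
        MessageDigest md = newDigest(algorithm);
        try (InputStream in = Files.newInputStream(file)) {
            byte[] buffer = new byte[8192];
            int read;
            while ((read = in.read(buffer)) != -1) {
                md.update(buffer, 0, read); // stream the file in chunks
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return hex(md.digest());
    }

    private static MessageDigest newDigest(String algorithm) {
        try {
            return MessageDigest.getInstance(algorithm);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalArgumentException("Unknown algorithm: " + algorithm, e);
        }
    }

    private static String hex(byte[] bytes) {
        StringBuilder sb = new StringBuilder(bytes.length * 2);
        for (byte b : bytes) {
            sb.append(String.format("%02x", b));
        }
        return sb.toString();
    }
}
```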

9.8.2. Reserved Values for URLs

You can insert reserved values (surrounded by curly braces) into the URL to make sure that the links stay up to date:

- repository: If you use a build-snapshot, milestone, or release candidate of Data Flow, the repository refers to the repo-spring-io repository. Otherwise, it refers to Maven Central.
- version: Inserts the version of the jar/pom.

For example,

https://myrepository/org/springframework/cloud/spring-cloud-dataflow-shell/{version}/spring-cloud-dataflow-shell-{version}.jar

produces

https://myrepository/org/springframework/cloud/spring-cloud-dataflow-shell/2.1.4/spring-cloud-dataflow-shell-2.1.4.jar

if you were using the 2.1.4 version of the Spring Cloud Data Flow Shell.
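The substitution of reserved values can be sketched as plain string replacement. This is a hypothetical illustration, not Data Flow's implementation: the caller supplies both repository URLs, and the rule for recognizing snapshot, milestone, and release-candidate versions (suffixes such as -SNAPSHOT, -M1, or -RC1) is an assumption.

```java
// Hypothetical sketch of expanding {version} and {repository} in a URL.
final class ReservedUrlValues {

    // Assumed naming convention for pre-release versions.
    static boolean isPreRelease(String version) {
        return version.endsWith("-SNAPSHOT") || version.matches(".*-(M|RC)\\d+$");
    }

    static String expand(String template, String version, String springRepo, String mavenCentral) {
        String repository = isPreRelease(version) ? springRepo : mavenCentral;
        return template.replace("{version}", version).replace("{repository}", repository);
    }
}
```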

10. Configuration - Cloud Foundry

This section describes how to configure Spring Cloud Data Flow server’s features, such as security and which relational database to use. It also describes how to configure Spring Cloud Data Flow shell’s features.

10.1. Feature Toggles

Data Flow server offers a specific set of features that you can enable or disable when launching. These features include all the lifecycle operations and REST endpoints (server, client implementations including Shell and the UI) for:

- Streams
- Tasks

You can enable or disable these features by setting the following boolean properties when you launch the Data Flow server:

- spring.cloud.dataflow.features.streams-enabled
- spring.cloud.dataflow.features.tasks-enabled

By default, all features are enabled.

The REST endpoint (/features) provides information on the enabled and disabled features.

10.2. Deployer Properties

You can use the following configuration properties of the Data Flow server’s Cloud Foundry deployer to customize how applications are deployed.

When deploying with the Data Flow shell, you can use the syntax deployer.<appName>.cloudfoundry.<deployerPropertyName>. See the examples of shell usage after the following table.

These properties are also used when configuring the Cloud Foundry Task platforms in the Data Flow server and the Cloud Foundry platforms in Skipper for deploying Streams.

| Deployer Property Name | Description | Default Value |
|---|---|---|
| services | The names of services to bind to the deployed application. | <none> |
| host | The host name to use as part of the route. | hostname derived by Cloud Foundry |
| domain | The domain to use when mapping routes for the application. | <none> |
| routes | The list of routes that the application should be bound to. Mutually exclusive with host and domain. | <none> |
| buildpack | The buildpack to use for deploying the application. Deprecated; use buildpacks. | |
| buildpacks | The list of buildpacks to use for deploying the application. | |
| memory | The amount of memory to allocate. Default unit is mebibytes; 'M' and 'G' suffixes are supported. | 1024m |
| disk | The amount of disk space to allocate. Default unit is mebibytes; 'M' and 'G' suffixes are supported. | 1024m |
| healthCheck | The type of health check to perform on the deployed application. Values can be HTTP, NONE, PROCESS, and PORT. | PORT |
| healthCheckHttpEndpoint | The path that the HTTP health check uses. | /health |
| healthCheckTimeout | The timeout value for health checks, in seconds. | 120 |
| instances | The number of instances to run. | 1 |
| enableRandomAppNamePrefix | Flag to enable prefixing the app name with a random prefix. | true |
| apiTimeout | Timeout for blocking API calls, in seconds. | 360 |
| statusTimeout | Timeout for status API operations, in milliseconds. | 5000 |
| useSpringApplicationJson | Flag to indicate whether application properties are fed into | true |
| stagingTimeout | Timeout allocated for staging the application. | 15 minutes |
| startupTimeout | Timeout allocated for starting the application. | 5 minutes |
| appNamePrefix | String to use as prefix for the name of the deployed application. | The Spring Boot property |
| deleteRoutes | Whether to also delete routes when un-deploying an application. | true |
| javaOpts | The Java options to pass to the JVM, for example -Dtest=foo. | <none> |
| pushTasksEnabled | Whether to push task applications or assume that the application already exists when launched. | true |
| autoDeleteMavenArtifacts | Whether to automatically delete Maven artifacts from the local repository when deployed. | true |
| env.<key> | Defines a top-level environment variable. This is useful for customizing Java buildpack configuration, which must be included as top-level environment variables in the application manifest, as the Java buildpack does not recognize | The deployer determines if the app has Java CfEnv in its classpath. If so, it applies the required configuration. |
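The memory and disk rows accept a bare number (read as mebibytes) or a value with an M or G suffix. The following hypothetical parser illustrates that convention; it is not the Cloud Foundry deployer's code, and accepting lowercase suffixes is an assumption based on the 1024m defaults shown in the table.

```java
import java.util.Locale;

// Hypothetical parser for memory/disk values: bare numbers are mebibytes,
// and M or G suffixes (any case) are accepted.
final class CfMemory {

    static long toMebibytes(String value) {
        String v = value.trim().toUpperCase(Locale.ROOT);
        if (v.endsWith("G")) {
            return Long.parseLong(v.substring(0, v.length() - 1).trim()) * 1024;
        }
        if (v.endsWith("M")) {
            return Long.parseLong(v.substring(0, v.length() - 1).trim());
        }
        return Long.parseLong(v);
    }
}
```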

Here are some examples using the Cloud Foundry deployment properties:

- You can set the buildpack that is used to deploy each application. For example, to use the Java offline buildpack, set the following environment variable:

cf set-env dataflow-server SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_DEPLOYMENT_BUILDPACKS java_buildpack_offline

Setting buildpack is now deprecated in favor of buildpacks, which lets you pass more than one buildpack if needed. For more information, see How Buildpacks Work.

- You can customize the health check mechanism used by Cloud Foundry to assert whether apps are running by using the SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_DEPLOYMENT_HEALTH_CHECK environment variable. The currently supported options are http (the default), port, and none.

You can also set environment variables that specify the HTTP-based health check endpoint and timeout: SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_DEPLOYMENT_HEALTH_CHECK_ENDPOINT and SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_DEPLOYMENT_HEALTH_CHECK_TIMEOUT, respectively. These default to /health (the Spring Boot default location) and 120 seconds.

- You can also specify deployment properties by using the DSL. For instance, if you want to set the allocated memory for the http application to 512m and also bind a postgres service to the jdbc application, you can run the following commands:

dataflow:> stream create --name postgresstream --definition "http | jdbc --tableName=names --columns=name"

dataflow:> stream deploy --name postgresstream --properties "deployer.http.memory=512, deployer.jdbc.cloudfoundry.services=postgres"

You can configure these settings separately for stream and task apps. To alter settings for tasks, substitute
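The --properties string in the postgresstream example addresses each app by name. The following hypothetical sketch groups such deployer.<app>.<property>=<value> entries by application, which can help when reviewing long property strings. It is not Data Flow's parser, and because it splits on plain commas, it does not handle quoted service values that themselves contain commas.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch: group "deployer.<app>.<property>=<value>" entries by app.
final class DeployerProperties {

    static Map<String, Map<String, String>> byApp(String properties) {
        Map<String, Map<String, String>> result = new LinkedHashMap<>();
        for (String entry : properties.split(",")) {
            String e = entry.trim();
            if (e.isEmpty()) {
                continue;
            }
            int eq = e.indexOf('=');
            if (eq < 0 || !e.startsWith("deployer.")) {
                throw new IllegalArgumentException("Not a deployer property: " + e);
            }
            // Key without the "deployer." prefix, e.g. "jdbc.cloudfoundry.services".
            String key = e.substring("deployer.".length(), eq);
            int dot = key.indexOf('.');
            if (dot < 0) {
                throw new IllegalArgumentException("Missing property name: " + e);
            }
            String app = key.substring(0, dot);
            String property = key.substring(dot + 1);
            result.computeIfAbsent(app, k -> new LinkedHashMap<>())
                    .put(property, e.substring(eq + 1).trim());
        }
        return result;
    }
}
```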

10.3. Tasks

The Data Flow server is responsible for deploying Tasks.

Tasks that are launched by Data Flow write their state to the same database that is used by the Data Flow server.

For Tasks which are Spring Batch Jobs, the job and step execution data is also stored in this database.

As with Skipper, Tasks can be launched to multiple platforms.

When Data Flow is running on Cloud Foundry, a Task platform must be defined.

To configure new platform accounts that target Cloud Foundry, provide an entry under the spring.cloud.dataflow.task.platform.cloudfoundry section in your application.yaml file or through another Spring Boot supported mechanism.

In the following example, two Cloud Foundry platform accounts named dev and qa are created.

The keys such as memory and disk are Cloud Foundry Deployer Properties.

spring:
  cloud:
    dataflow:
      task:
        platform:
          cloudfoundry:
            accounts:
              dev:
                connection:
                  url: https://api.run.pivotal.io
                  org: myOrg
                  space: mySpace
                  domain: cfapps.io
                  username: [email protected]
                  password: drowssap
                  skipSslValidation: false
                deployment:
                  memory: 512m
                  disk: 2048m
                  instances: 4
                  services: rabbit,postgres
                  appNamePrefix: dev1
              qa:
                connection:
                  url: https://api.run.pivotal.io
                  org: myOrgQA
                  space: mySpaceQA
                  domain: cfapps.io
                  username: [email protected]
                  password: drowssap
                  skipSslValidation: true
                deployment:
                  memory: 756m
                  disk: 724m
                  instances: 2
                  services: rabbitQA,postgresQA
                  appNamePrefix: qa1

Defining one platform as default lets you omit platformName where it would otherwise be required.

When launching a task, pass the value of the platform account name by using the task launch option --platformName. If you do not pass a value for platformName, the value default is used.

When deploying a task to multiple platforms, the configuration of the task needs to connect to the same database as the Data Flow Server.

You can configure the Data Flow server that is on Cloud Foundry to deploy tasks to Cloud Foundry or Kubernetes. See the section on Kubernetes Task Platform Configuration for more information.

Detailed examples of launching and scheduling tasks across multiple platforms are available in the Multiple Platform Support for Tasks section on dataflow.spring.io.

10.4. Application Names and Prefixes

To help avoid clashes with routes across spaces in Cloud Foundry, a naming strategy that adds a random prefix to each deployed application is available and is enabled by default. You can override the default configuration and set the respective properties by using cf set-env commands.

For instance, if you want to disable the randomization, you can override it by using the following command:

cf set-env dataflow-server SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_DEPLOYMENT_ENABLE_RANDOM_APP_NAME_PREFIX false

10.5. Custom Routes

As an alternative to a random name or to get even more control over the hostname used by the deployed apps, you can use custom deployment properties, as the following example shows:

dataflow:>stream create foo --definition "http | log"

dataflow:>stream deploy foo --properties "deployer.http.cloudfoundry.domain=mydomain.com,deployer.http.cloudfoundry.host=myhost,deployer.http.cloudfoundry.route-path=my-path"

The preceding example binds the http app to the myhost.mydomain.com/my-path URL. Note that this example shows all of the available customization options. In practice, you can use only one or two of the three.
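The way host, domain, and route-path combine into the route can be sketched as simple concatenation. This is a hypothetical illustration: the fallback host and domain used when a property is omitted are caller-supplied assumptions standing in for whatever Cloud Foundry would otherwise derive.

```java
// Hypothetical sketch: compose a route from host, domain, and route-path,
// falling back to caller-supplied defaults when host or domain is omitted.
final class CustomRoute {

    static String route(String host, String domain, String routePath,
                        String defaultHost, String defaultDomain) {
        String h = isBlank(host) ? defaultHost : host;
        String d = isBlank(domain) ? defaultDomain : domain;
        String path = isBlank(routePath) ? ""
                : (routePath.startsWith("/") ? routePath : "/" + routePath);
        return h + "." + d + path;
    }

    private static boolean isBlank(String s) {
        return s == null || s.isBlank();
    }
}
```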

10.6. Docker Applications

Starting with version 1.2, it is possible to register and deploy Docker based apps as part of streams and tasks by using Data Flow for Cloud Foundry.

If you use Spring Boot and RabbitMQ-based Docker images, you can provide a common deployment property to facilitate binding the apps to the RabbitMQ service. Assuming your RabbitMQ service is named rabbit, you can provide the following:

cf set-env dataflow-server SPRING_APPLICATION_JSON '{"spring.cloud.dataflow.applicationProperties.stream.spring.rabbitmq.addresses": "${vcap.services.rabbit.credentials.protocols.amqp.uris}"}'

For Spring Cloud Task apps, you can use something similar to the following, if you use a database service instance named postgres:

cf set-env SPRING_DATASOURCE_URL '${vcap.services.postgres.credentials.jdbcUrl}'
cf set-env SPRING_DATASOURCE_USERNAME '${vcap.services.postgres.credentials.username}'
cf set-env SPRING_DATASOURCE_PASSWORD '${vcap.services.postgres.credentials.password}'
cf set-env SPRING_DATASOURCE_DRIVER_CLASS_NAME 'org.postgresql.Driver'

For non-Java or non-Boot applications, your Docker app must parse the VCAP_SERVICES variable to bind to any available services.

Passing application properties

When using non-Boot applications, chances are that you want to pass the application properties by using traditional environment variables, as opposed to using the special

10.7. Application-level Service Bindings

When deploying streams in Cloud Foundry, you can take advantage of application-specific service bindings, so not all services are globally configured for all the apps orchestrated by Spring Cloud Data Flow.

For instance, if you want to provide a postgres service binding only for the jdbc application in the following stream definition, you can pass the service binding as a deployment property:

dataflow:>stream create --name httptojdbc --definition "http | jdbc"

dataflow:>stream deploy --name httptojdbc --properties "deployer.jdbc.cloudfoundry.services=postgresService"

In this example, postgresService is the name of the service bound only to the jdbc application; the http application does not get the binding by this method.

If you have more than one service to bind, you can pass them as comma-separated items (for example: deployer.jdbc.cloudfoundry.services=postgresService,someService).

10.8. Configuring Service binding parameters

The CloudFoundry API supports providing configuration parameters when binding a service instance. Some service brokers require or recommend binding configuration. For example, binding the Google Cloud Platform service using the CF CLI looks something like:

cf bind-service my-app my-google-bigquery-example -c '{"role":"bigquery.user"}'

Likewise, the NFS Volume Service supports binding configuration such as:

cf bind-service my-app nfs_service_instance -c '{"uid":"1000","gid":"1000","mount":"/var/volume1","readonly":true}'

Starting with version 2.0, Data Flow for Cloud Foundry lets you provide binding configuration parameters in the app-level or server-level cloudfoundry.services deployment property. For example, to bind to the NFS service shown earlier:

dataflow:> stream deploy --name mystream --properties "deployer.<app>.cloudfoundry.services='nfs_service_instance uid:1000,gid:1000,mount:/var/volume1,readonly:true'"

The format is intended to be compatible with the Data Flow DSL parser.

Generally, the cloudfoundry.services deployment property accepts a comma-delimited value.

Since a comma is also used to separate configuration parameters, and to avoid whitespace issues, any item that includes configuration parameters must be enclosed in single quotes. Valid values include things like:

rabbitmq,'nfs_service_instance uid:1000,gid:1000,mount:/var/volume1,readonly:true',postgres,'my-google-bigquery-example role:bigquery.user'

Spaces are permitted within single quotes and = may be used instead of : to delimit key-value pairs.
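The quoting rules above can be made concrete with a small parser. The following is a hypothetical sketch, not the Cloud Foundry deployer's implementation: it splits on commas outside single quotes, assumes the service name ends at the first whitespace inside a quoted item, and accepts either : or = between a key and its value.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical parser for a cloudfoundry.services value. It only
// illustrates the quoting rules; it is not the deployer's code.
final class ServiceBindingParser {

    /** A service to bind, with its (possibly empty) binding parameters. */
    record Binding(String service, Map<String, String> params) {}

    static List<Binding> parse(String value) {
        List<Binding> bindings = new ArrayList<>();
        for (String raw : splitOutsideQuotes(value)) {
            String item = raw.trim();
            if (item.isEmpty()) {
                continue;
            }
            // Quoted items carry binding parameters; drop the quotes.
            if (item.length() >= 2 && item.startsWith("'") && item.endsWith("'")) {
                item = item.substring(1, item.length() - 1).trim();
            }
            // Assumption: the service name ends at the first whitespace.
            String[] nameAndParams = item.split("\\s+", 2);
            Map<String, String> params = new LinkedHashMap<>();
            if (nameAndParams.length == 2) {
                for (String pair : nameAndParams[1].split(",")) {
                    String p = pair.trim();
                    if (p.isEmpty()) {
                        continue;
                    }
                    int sep = firstSeparator(p);
                    if (sep < 0) {
                        throw new IllegalArgumentException("Expected key:value or key=value: " + p);
                    }
                    params.put(p.substring(0, sep).trim(), p.substring(sep + 1).trim());
                }
            }
            bindings.add(new Binding(nameAndParams[0], params));
        }
        return bindings;
    }

    // Splits on commas that are not inside single quotes; quotes are kept.
    private static List<String> splitOutsideQuotes(String value) {
        List<String> parts = new ArrayList<>();
        StringBuilder current = new StringBuilder();
        boolean inQuotes = false;
        for (char c : value.toCharArray()) {
            if (c == '\'') {
                inQuotes = !inQuotes;
            }
            if (c == ',' && !inQuotes) {
                parts.add(current.toString());
                current.setLength(0);
            } else {
                current.append(c);
            }
        }
        parts.add(current.toString());
        return parts;
    }

    // Either ':' or '=' may separate a key from its value; use the first one.
    private static int firstSeparator(String pair) {
        int colon = pair.indexOf(':');
        int equals = pair.indexOf('=');
        if (colon < 0 || equals < 0) {
            return Math.max(colon, equals);
        }
        return Math.min(colon, equals);
    }
}
```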


10.9. User-provided Services

In addition to marketplace services, Cloud Foundry supports User-provided Services (UPS). Throughout this reference manual, regular services have been mentioned, but there is nothing precluding the use of User-provided Services as well, whether for use as the messaging middleware (for example, if you want to use an external Apache Kafka installation) or for use by some of the stream applications (for example, an Oracle Database).

Now we review an example of extracting and supplying the connection credentials from a UPS.

The following example shows a sample UPS setup for Apache Kafka:

cf create-user-provided-service kafkacups -p '{"brokers":"HOST:PORT","zkNodes":"HOST:PORT"}'

The UPS credentials are wrapped within VCAP_SERVICES, and they can be supplied directly in the stream definition, as the following example shows.

stream create fooz --definition "time | log"

stream deploy fooz --properties "app.time.spring.cloud.stream.kafka.binder.brokers=${vcap.services.kafkacups.credentials.brokers},app.time.spring.cloud.stream.kafka.binder.zkNodes=${vcap.services.kafkacups.credentials.zkNodes},app.log.spring.cloud.stream.kafka.binder.brokers=${vcap.services.kafkacups.credentials.brokers},app.log.spring.cloud.stream.kafka.binder.zkNodes=${vcap.services.kafkacups.credentials.zkNodes}"

10.10. Database Connection Pool

As of Data Flow 2.0, the Spring Cloud Connector library is no longer used to create the DataSource. Instead, the java-cfenv library is used, which allows you to set Spring Boot properties to configure the connection pool.

10.11. Maximum Disk Quota

By default, every application in Cloud Foundry starts with 1G disk quota and this can be adjusted to a default maximum of 2G. The default maximum can also be overridden up to 10G by using Pivotal Cloud Foundry’s (PCF) Ops Manager GUI.

This configuration is relevant for Spring Cloud Data Flow because every task deployment is composed of applications (typically Spring Boot uber-jars), and those applications are resolved from a remote Maven repository. After resolution, the application artifacts are downloaded to the local Maven repository for caching and reuse. With this happening in the background, the default disk quota (1G) can fill up rapidly, especially when we experiment with streams that are made up of unique applications. To overcome this disk limitation, and depending on your scaling requirements, you may want to change the default maximum from 2G to 10G. The following steps change the default maximum disk quota allocation.

10.11.1. PCF’s Operations Manager

From PCF’s Ops Manager, select the “Pivotal Elastic Runtime” tile and navigate to the “Application Developer Controls” tab. Change the “Maximum Disk Quota per App (MB)” setting from 2048 (2G) to 10240 (10G). Save the disk quota update and click “Apply Changes” to complete the configuration override.

10.12. Scale Application

Once the disk quota change has been successfully applied, and assuming you have a running application, you can scale the application with a new disk_limit through the CF CLI, as the following example shows:

→ cf scale dataflow-server -k 10GB

Scaling app dataflow-server in org ORG / space SPACE as user...

OK

....

....

....

....

state since cpu memory disk details

#0 running 2016-10-31 03:07:23 PM 1.8% 497.9M of 1.1G 193.9M of 10G

You can then list the applications and see the new maximum disk space, as the following example shows:

→ cf apps

Getting apps in org ORG / space SPACE as user...

OK

name requested state instances memory disk urls

dataflow-server started 1/1 1.1G 10G dataflow-server.apps.io

10.13. Managing Disk Use

Even when configuring the Data Flow server to use 10G of space, there is the possibility of exhausting the available space on the local disk. To prevent this, jar artifacts downloaded from external sources (that is, apps registered as http or maven resources) are automatically deleted whenever the application is deployed, whether or not the deployment request succeeds.

This behavior is optimal for production environments in which container runtime stability is more critical than I/O latency incurred during deployment.

In development environments, deployment happens more frequently. Additionally, the jar artifact (or a lighter metadata jar) contains metadata describing application configuration properties, which is used by various operations related to application configuration that are performed more frequently during pre-production activities (see Application Metadata for details).

To provide a more responsive interactive developer experience at the expense of more disk usage in pre-production environments, you can set the CloudFoundry deployer property autoDeleteMavenArtifacts to false.
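The cleanup rule described above (delete the downloaded jar after every deployment attempt, successful or not, unless auto-deletion is turned off) maps naturally onto a try/finally block. The following is a minimal sketch of that rule, not the Cloud Foundry deployer's implementation: the Deployer interface, the deployAndMaybeDelete method, and the decision to treat http, https, and maven URIs as "external" are assumptions made for illustration.

```java
import java.net.URI;
import java.nio.file.Files;
import java.nio.file.Path;

public class ArtifactCleanupSketch {

    /** Hypothetical stand-in for the call that pushes an app to the platform. */
    interface Deployer {
        void deploy(Path artifact) throws Exception;
    }

    /** Assumption: apps registered as http(s) or maven resources count as external downloads. */
    static boolean isExternal(URI registeredUri) {
        String scheme = registeredUri.getScheme();
        return "http".equals(scheme) || "https".equals(scheme) || "maven".equals(scheme);
    }

    /**
     * Deploys the cached jar. When autoDelete is true and the app came from an external
     * source, the cached jar is deleted afterwards, whether deploy() returns or throws.
     */
    static void deployAndMaybeDelete(URI registeredUri, Path cachedJar, boolean autoDelete,
                                     Deployer deployer) throws Exception {
        try {
            deployer.deploy(cachedJar);
        } finally {
            if (autoDelete && isExternal(registeredUri)) {
                Files.deleteIfExists(cachedJar);
            }
        }
    }

    public static void main(String[] args) throws Exception {
        Path jar = Files.createTempFile("http-source", ".jar");
        URI uri = URI.create("maven://org.springframework.cloud.stream.app:http-source-rabbit:1.0.0");
        try {
            // Simulate a failed deployment request
            deployAndMaybeDelete(uri, jar, true, artifact -> {
                throw new IllegalStateException("deployment failed");
            });
        } catch (IllegalStateException e) {
            System.out.println("deploy failed: " + e.getMessage());
        }
        // The cached jar has been removed even though the deployment failed
        System.out.println("jar still cached: " + Files.exists(jar));
    }
}
```

Passing false for autoDelete in this sketch corresponds to setting autoDeleteMavenArtifacts to false: the jar stays in the local cache for later metadata lookups.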

If you deploy the Data Flow server by using the default port health check type, you must explicitly monitor the disk space on the server to avoid running out of space.

If you deploy the server by using the http health check type (see the manifest example below), the Data Flow server is restarted if there is low disk space. This is because Spring Boot's Disk Space Health Indicator reports the server as down when free disk space falls below its configured threshold, which causes the http health check to fail.

You can configure the settings of the Disk Space Health Indicator by using the properties that have the management.health.diskspace prefix.
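The health indicator's decision boils down to comparing the usable space on a path with a threshold. The following stdlib-only sketch mirrors that rule; it is not Spring Boot's code. It assumes the defaults we believe Spring Boot uses for management.health.diskspace.path (the current working directory) and management.health.diskspace.threshold (10 MB), so check the Spring Boot documentation for your version before relying on them.

```java
import java.io.File;

public class DiskSpaceCheckSketch {

    /** Assumed default for management.health.diskspace.threshold: 10 MB. */
    static final long DEFAULT_THRESHOLD_BYTES = 10L * 1024 * 1024;

    enum Status { UP, DOWN }

    /** UP while usable space is at least the threshold, DOWN once it drops below. */
    static Status status(long usableBytes, long thresholdBytes) {
        return usableBytes >= thresholdBytes ? Status.UP : Status.DOWN;
    }

    public static void main(String[] args) {
        // Assumed default for management.health.diskspace.path: the working directory
        File path = new File(args.length > 0 ? args[0] : ".");
        long usable = path.getUsableSpace();
        System.out.printf("%s: %d bytes usable, threshold %d bytes -> %s%n",
                path.getAbsolutePath(), usable, DEFAULT_THRESHOLD_BYTES,
                status(usable, DEFAULT_THRESHOLD_BYTES));
    }
}
```

With the http health check type, a DOWN status makes the health endpoint respond with an error status, and that failed check is what prompts Cloud Foundry to restart the instance.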

For version 1.7, we are investigating the use of Volume Services for the Data Flow server to store .jar artifacts before pushing them to Cloud Foundry.

The following manifest excerpt shows how to configure the http health check type with an endpoint called /management/health:

---
...
health-check-type: http
health-check-http-endpoint: /management/health

10.14. Application Resolution Alternatives

Although we recommend using a Maven repository such as Artifactory for application registration (see Register a Stream Application), there might be situations in which one of the following alternative approaches makes sense.

- With the help of Spring Boot, you can serve static content in Cloud Foundry. A simple Spring Boot application can bundle all the required stream and task applications. When it runs on Cloud Foundry, this static application can then serve the über-jars. From the shell, you can, for example, register the application with the name http-source.jar by using --uri=http://<Route-To-StaticApp>/http-source.jar.
- The über-jars can be hosted on any external server that is reachable over HTTP. They can also be resolved from raw GitHub URIs. From the shell, you can, for example, register the app with the name http-source.jar by using --uri=http://<Raw_GitHub_URI>/http-source.jar.
- Static Buildpack support in Cloud Foundry is another option. A similar HTTP resolution works with this model, too.
- Volume Services is another good option. The required über-jars can be hosted in an external file system. With the help of Volume Services, you can, for example, register the application with the name http-source.jar by using --uri=file://<Path-To-FileSystem>/http-source.jar.
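Each alternative above is ultimately expressed through the scheme of the --uri value used at registration time. As a rough illustration only, the following sketch classifies a registration URI by its scheme. The Source enum and classify method are hypothetical and do not reflect how Data Flow's own resource loaders are implemented; the example hosts and paths stand in for the <Route-To-StaticApp> and <Path-To-FileSystem> placeholders and are also hypothetical.

```java
import java.net.URI;
import java.util.Locale;

public class RegistrationUriSketch {

    /** Hypothetical categories matching the alternatives described above. */
    enum Source { MAVEN_REPOSITORY, HTTP_SERVER, FILE_SYSTEM, OTHER }

    static Source classify(String uri) {
        String scheme = URI.create(uri).getScheme();
        if (scheme == null) {
            return Source.OTHER; // relative reference, no scheme at all
        }
        switch (scheme.toLowerCase(Locale.ROOT)) {
            case "maven":
                return Source.MAVEN_REPOSITORY;
            case "http":
            case "https":
                // static Spring Boot app, Static Buildpack, external server, or raw GitHub URI
                return Source.HTTP_SERVER;
            case "file":
                // for example, a file system mounted through Volume Services
                return Source.FILE_SYSTEM;
            default:
                return Source.OTHER;
        }
    }

    public static void main(String[] args) {
        String[] uris = {
            "maven://org.springframework.cloud.stream.app:http-source-rabbit:1.0.0",
            "https://static-app.example.com/http-source.jar",
            "file:///mnt/volume/http-source.jar"
        };
        for (String uri : uris) {
            System.out.println(classify(uri) + " <- " + uri);
        }
    }
}
```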

10.15. Security

By default, the Data Flow server is unsecured and runs on an unencrypted HTTP connection. You can secure your REST endpoints (as well as the Data Flow Dashboard) by enabling HTTPS and requiring clients to authenticate.

For more details about securing the REST endpoints and configuring authentication against an OAuth backend (UAA and SSO running on Cloud Foundry), see the security section of the core Security Configuration. You can configure the security details in dataflow-server.yml or pass them as environment variables through cf set-env commands.

10.15.1. Authentication

Spring Cloud Data Flow can integrate with either the Pivotal Single Sign-On Service (for example, on PWS) or the Cloud Foundry User Account and Authentication (UAA) Server.

Pivotal Single Sign-On Service

When deploying Spring Cloud Data Flow to Cloud Foundry, you can bind the application to the Pivotal Single Sign-On Service. By doing so, Spring Cloud Data Flow takes advantage of the Java CFEnv, which provides Cloud Foundry-specific auto-configuration support for OAuth 2.0.

To do so, bind the Pivotal Single Sign-On Service to your Data Flow Server application and provide the following properties:

SPRING_CLOUD_DATAFLOW_SECURITY_CFUSEUAA: false (1)
SECURITY_OAUTH2_CLIENT_CLIENTID: "${security.oauth2.client.clientId}"
SECURITY_OAUTH2_CLIENT_CLIENTSECRET: "${security.oauth2.client.clientSecret}"
SECURITY_OAUTH2_CLIENT_ACCESSTOKENURI: "${security.oauth2.client.accessTokenUri}"
SECURITY_OAUTH2_CLIENT_USERAUTHORIZATIONURI: "${security.oauth2.client.userAuthorizationUri}"
SECURITY_OAUTH2_RESOURCE_USERINFOURI: "${security.oauth2.resource.userInfoUri}"

(1) It is important that the property spring.cloud.dataflow.security.cf-use-uaa is set to false.

Authorization is similarly supported for non-Cloud Foundry security scenarios. See the security section of the core Data Flow Security Configuration.

As the provisioning of roles can vary widely across environments, by default we assign all Spring Cloud Data Flow roles to users. You can customize this behavior by providing your own AuthoritiesExtractor.

The following example shows one possible approach to setting the custom AuthoritiesExtractor on the UserInfoTokenServices:

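import org.springframework.beans.factory.config.BeanPostProcessor;
import org.springframework.boot.autoconfigure.security.oauth2.resource.AuthoritiesExtractor;
import org.springframework.boot.autoconfigure.security.oauth2.resource.UserInfoTokenServices;
import org.springframework.context.ApplicationContext;
import org.springframework.context.ApplicationContextAware;

public class MyUserInfoTokenServicesPostProcessor
        implements BeanPostProcessor, ApplicationContextAware {

    // Injected by Spring; used to look up your custom AuthoritiesExtractor bean
    private ApplicationContext ctx;

    @Override
    public void setApplicationContext(ApplicationContext applicationContext) {
        this.ctx = applicationContext;
    }

    @Override
    public Object postProcessBeforeInitialization(Object bean, String beanName) {
        if (bean instanceof UserInfoTokenServices) {
            final UserInfoTokenServices userInfoTokenServices = (UserInfoTokenServices) bean;
            userInfoTokenServices.setAuthoritiesExtractor(ctx.getBean(AuthoritiesExtractor.class));
        }
        return bean;
    }

    @Override
    public Object postProcessAfterInitialization(Object bean, String beanName) {
        return bean;
    }
}

Then you can declare it in your configuration class as follows: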

@Bean
public BeanPostProcessor myUserInfoTokenServicesPostProcessor() {
    BeanPostProcessor postProcessor = new MyUserInfoTokenServicesPostProcessor();
    return postProcessor;
}

Cloud Foundry UAA

The availability of Cloud Foundry User Account and Authentication (UAA) depends on the Cloud Foundry environment.

In order to provide UAA integration, you have to provide the necessary OAuth2 configuration properties (for example, by setting the SPRING_APPLICATION_JSON property).

The following JSON example shows how to create a security configuration:

{
  "security.oauth2.client.client-id": "scdf",
  "security.oauth2.client.client-secret": "scdf-secret",
  "security.oauth2.client.access-token-uri": "https://login.cf.myhost.com/oauth/token",
  "security.oauth2.client.user-authorization-uri": "https://login.cf.myhost.com/oauth/authorize",
  "security.oauth2.resource.user-info-uri": "https://login.cf.myhost.com/userinfo"
}

By default, the spring.cloud.dataflow.security.cf-use-uaa property is set to true. This property activates a special AuthoritiesExtractor called CloudFoundryDataflowAuthoritiesExtractor.

If you do not use Cloud Foundry UAA, you should set spring.cloud.dataflow.security.cf-use-uaa to false.

Under the covers, this AuthoritiesExtractor calls out to the Cloud Foundry Apps API and ensures that users are in fact Space Developers. If the authenticated user is verified as a Space Developer, all roles are assigned.
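The role decision described above can be modeled in a few lines. This is a simplified sketch, not CloudFoundryDataflowAuthoritiesExtractor itself: the SpaceDeveloperCheck interface is a hypothetical stand-in for the Cloud Foundry Apps API call, the role names are illustrative, and returning no roles for users who are not Space Developers is an assumption.

```java
import java.util.Collections;
import java.util.EnumSet;
import java.util.Set;

public class SpaceDeveloperRolesSketch {

    /** Illustrative role names; check your Data Flow version for the actual set. */
    enum Role { CREATE, DEPLOY, DESTROY, MANAGE, MODIFY, SCHEDULE, VIEW }

    /** Hypothetical stand-in for the call to the Cloud Foundry Apps API. */
    interface SpaceDeveloperCheck {
        boolean isSpaceDeveloper(String accessToken);
    }

    /** All roles for a verified Space Developer; none otherwise (assumption). */
    static Set<Role> extractRoles(String accessToken, SpaceDeveloperCheck check) {
        if (check.isSpaceDeveloper(accessToken)) {
            return Collections.unmodifiableSet(EnumSet.allOf(Role.class));
        }
        return Collections.emptySet();
    }

    public static void main(String[] args) {
        // Hypothetical check: only this token belongs to a Space Developer
        SpaceDeveloperCheck check = token -> token.equals("developer-token");
        System.out.println(extractRoles("developer-token", check));
        System.out.println(extractRoles("auditor-token", check));
    }
}
```

The all-or-nothing shape reflects the text above: membership in the space is the only signal consulted, so a finer-grained mapping requires a custom AuthoritiesExtractor.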

10.16. Configuration Reference

You must provide several pieces of configuration. These are Spring Boot @ConfigurationProperties, so you can set them as environment variables or by any other means that Spring Boot supports. The following listing is in environment variable format, as that is an easy way to get started configuring Boot applications in Cloud Foundry.

Note that in the future, you will be able to deploy tasks to multiple platforms, but for 2.0.0.M1 you can deploy only to a single platform and the name must be default.
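Spring Boot documents a simple rule for deriving an environment variable name from a canonical property name: replace dots with underscores, remove dashes, and convert to upper case. The hypothetical helper below applies that rule and, to match the listing that follows, copies the [default] account key through unchanged. For example, it turns spring.cloud.dataflow.task.platform.cloudfoundry.accounts[default].connection.url into the CONNECTION_URL variable in the listing, and spring.cloud.dataflow.security.cf-use-uaa into the SPRING_CLOUD_DATAFLOW_SECURITY_CFUSEUAA variable used in the Single Sign-On example. Treat it as an illustration of the naming convention, not as Spring Boot's binder.

```java
public class EnvVarNameSketch {

    /**
     * Converts a canonical property name to environment-variable form:
     * '.' becomes '_', '-' is dropped, letters are upper-cased.
     * Text inside [...] (such as a map key) is copied unchanged, as in the listing.
     */
    static String toEnvVar(String propertyName) {
        StringBuilder out = new StringBuilder();
        boolean inBrackets = false;
        for (char c : propertyName.toCharArray()) {
            if (c == '[') {
                inBrackets = true;
                out.append(c);
            } else if (c == ']') {
                inBrackets = false;
                out.append(c);
            } else if (inBrackets) {
                out.append(c);
            } else if (c == '.') {
                out.append('_');
            } else if (c != '-') {
                out.append(Character.toUpperCase(c));
            }
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(toEnvVar(
                "spring.cloud.dataflow.task.platform.cloudfoundry.accounts[default].connection.url"));
        System.out.println(toEnvVar("spring.cloud.dataflow.security.cf-use-uaa"));
    }
}
```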

# Default values appear after the equal signs.
# Example values, typical for Pivotal Web Services, are included as comments.

# URL of the CF API (the one used with cf login -a, for example) - for example, https://api.run.pivotal.io
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_CONNECTION_URL=
# The name of the organization that owns the space - for example, youruser-org
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_CONNECTION_ORG=
# The name of the space into which modules will be deployed - for example, development
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_CONNECTION_SPACE=
# The root domain to use when mapping routes - for example, cfapps.io
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_CONNECTION_DOMAIN=
# The user name and password of the user to use to create applications
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_CONNECTION_USERNAME=
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_CONNECTION_PASSWORD=
# The identity provider to be used when accessing the Cloud Foundry API (optional).
# The passed string has to be a URL-encoded JSON object, containing the field origin with the origin_key of an identity provider as its value - for example, {"origin":"uaa"}
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_CONNECTION_LOGIN_HINT=
# Whether to allow self-signed certificates during SSL validation (you should NOT do so in production)
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_CONNECTION_SKIP_SSL_VALIDATION=
# A comma-separated set of service instance names to bind to every deployed task application.
# Among other things, this should include an RDBMS service that is used
# for Spring Cloud Task execution reporting, such as my_postgres
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_DEPLOYMENT_SERVICES=
spring.cloud.deployer.cloudfoundry.task.services=
# Timeout, in seconds, to use when doing blocking API calls to Cloud Foundry
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_DEPLOYMENT_API_TIMEOUT=
# Timeout, in milliseconds, to use when querying the Cloud Foundry API to compute app status
SPRING_CLOUD_DATAFLOW_TASK_PLATFORM_CLOUDFOUNDRY_ACCOUNTS[default]_DEPLOYMENT_STATUS_TIMEOUT=

Note that you can set spring.cloud.deployer.cloudfoundry.services, spring.cloud.deployer.cloudfoundry.buildpacks, or the Spring Cloud Deployer-standard spring.cloud.deployer.memory and spring.cloud.deployer.disk as part of an individual deployment request by using the deployer.<app-name> shortcut, as the following example shows:

stream create --name ticktock --definition "time | log"